[Project 012] Chinese Zodiac Classification with AlexNet (Demo Version)

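Author: Xinyu OU (欧新宇)

Current version: Release v1.0

Development platform: Paddle 2.3.2

Runtime environment: Intel Core i7-7700K CPU 4.2GHz, NVIDIA GeForce GTX 1080 Ti

The dataset used in this tutorial is for teaching and academic exchange only; do not use it commercially.

Last updated: October 9, 2022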

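[Objectives]

- Learn to implement a convolutional neural network with Paddle 2.3+

- Learn to design the AlexNet class structure yourself, and to train, validate, and run inference with the AlexNet model

- Learn to evaluate the overall accuracy of a model and to predict single samples

- Learn to use logging to output and save logs

- Become proficient in function-based programming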

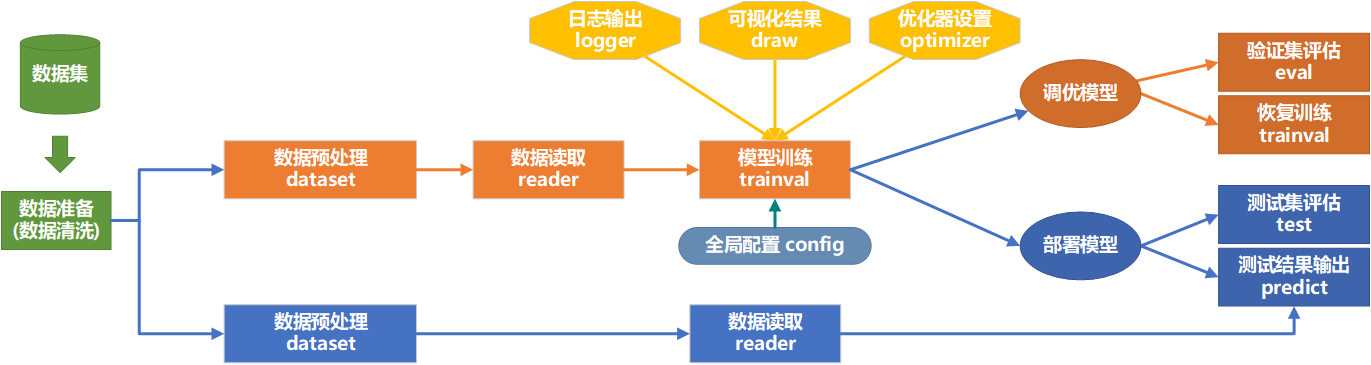

[Code Logic Structure Diagram]

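[Experiment 1] Dataset Preparation

Abstract: Before a model can be trained, the data must be preprocessed into a format suitable for training. The dataset contains 12 classes, with 7,199 training samples, 650 validation samples, and 660 test samples, 8,509 in total, including 9 corrupted files.

Objectives:

- Learn to inspect the file structure of a dataset and decide whether data cleaning is needed, including removing unreadable samples and handling overly long or non-standard file names

- Be able to split the dataset into four subsets (training, validation, training-validation, and test) and generate the data lists

- Be able to generate the dataset information file dataset_info.json, which summarizes the dataset, from the split results and the sample classes

- Be able to display and preview basic information about the data, including data volume, size, data type, and bit depth

1.0 Data Cleaning

Data cleaning in this project mainly deals with corrupted files. It tries to read every image and marks an image as corrupted if it cannot be read correctly.

Note: Data cleaning generally only needs to be run once, and it takes a long time.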

    # ##################################################################################
    # # Data cleaning
    # # Author: Xinyu Ou (http://ouxinyu.cn)
    # # Dataset: Chinese Zodiac dataset (Zodiac)
    # # Purpose:
    # # Index the corrupted image samples and save them to bad.txt. While scanning,
    # # '.DS_Store' and '.ipynb_checkpoints' are skipped automatically.
    # ##################################################################################
    # import os
    # import cv2
    # import codecs
    #
    # # When running locally, change the dataset name and absolute path to match your folders
    # dataset_name = 'Zodiac'
    # dataset_path = 'D:\\Workspace\\ExpDatasets\\'
    # dataset_root_path = os.path.join(dataset_path, dataset_name)
    # excluded_folder = ['.DS_Store', '.ipynb_checkpoints']  # excluded folders
    # class_prefix = ['train', 'valid', 'test']
    # num_bad = 0
    # num_good = 0
    # num_folder = 0
    #
    # # Delete the list of bad files first if it already exists
    # bad_list = os.path.join(dataset_root_path, 'bad.txt')
    # if os.path.exists(bad_list):
    #     os.remove(bad_list)
    #
    # # Run the cleaning
    # with codecs.open(bad_list, 'a', 'utf-8') as f_bad:
    #     for prefix in class_prefix:
    #         class_name_list = os.listdir(os.path.join(dataset_root_path, prefix))
    #         for class_name in class_name_list:
    #             if class_name not in excluded_folder:  # skip excluded folders
    #                 images = os.listdir(os.path.join(dataset_root_path, prefix, class_name))
    #                 for image in images:
    #                     if image not in excluded_folder:  # skip excluded entries
    #                         img_path = os.path.join(dataset_root_path, prefix, class_name, image)
    #                         try:
    #                             # cv2.imread returns None for unreadable files, so
    #                             # accessing .shape raises and marks the sample as bad
    #                             img = cv2.imread(img_path, 1)
    #                             x = img.shape
    #                             num_good += 1
    #                         except Exception:
    #                             bad_file = os.path.join(prefix, class_name, image)
    #                             f_bad.write("{}\n".format(bad_file))
    #                             num_bad += 1
    #             num_folder += 1
    #             print('\r Cleaning progress: {}/{}'.format(num_folder, 3*len(class_name_list)), end='')
    # print('Dataset cleaning finished: {} corrupted files, {} valid files.'.format(num_bad, num_good))

1.1 Generating the Image Lists and Class Labels

    ##################################################################################
    # Dataset preprocessing
    # Author: Xinyu Ou (http://ouxinyu.cn)
    # Dataset: Chinese Zodiac dataset (Zodiac)
    # Summary: 12 classes; 7199 training, 650 validation, and 660 test samples,
    #          8509 in total, including 9 corrupted files.
    # Purpose:
    # 1. The dataset comes with an official split; train:val:test is roughly 85:7.5:7.5
    # 2. Generate 4 list files: train.txt, val.txt, test.txt, and trainval.txt
    # 3. Write the basic dataset information as JSON: dataset name, sample counts,
    #    number of classes, and class labels
    ##################################################################################
    import os
    import cv2
    import json
    import codecs

    # Initialize parameters
    num_trainval = 0
    num_train = 0
    num_val = 0
    num_test = 0
    class_dim = 0
    dataset_info = {
        'dataset_name': '',
        'num_trainval': -1,
        'num_train': -1,
        'num_val': -1,
        'num_test': -1,
        'num_bad': -1,
        'class_dim': -1,
        'label_dict': {}
    }

    # When running locally, change the dataset name and absolute path to match your folders
    dataset_name = 'Zodiac'
    dataset_path = 'D:\\Workspace\\ExpDatasets\\'
    dataset_root_path = os.path.join(dataset_path, dataset_name)
    class_prefix = ['train', 'valid', 'test']
    excluded_folder = ['.DS_Store', '.ipynb_checkpoints']  # excluded files or folders

    # Paths of the generated files. The samples are stored in the train, valid, and test
    # folders, so no common data path is needed.
    trainval_list = os.path.join(dataset_root_path, 'trainval.txt')
    train_list = os.path.join(dataset_root_path, 'train.txt')
    val_list = os.path.join(dataset_root_path, 'val.txt')
    test_list = os.path.join(dataset_root_path, 'test.txt')
    dataset_info_list = os.path.join(dataset_root_path, 'dataset_info.json')

    # Read the list of bad samples produced by data cleaning
    bad_list = os.path.join(dataset_root_path, 'bad.txt')
    with codecs.open(bad_list, 'r', 'utf-8') as f_bad:
        bad_file = f_bad.read().splitlines()
    num_bad = len(bad_file)

    # The lists are opened in append mode, so delete any existing list files first
    for list_file in (trainval_list, train_list, val_list, test_list):
        if os.path.exists(list_file):
            os.remove(list_file)

    # train, valid, and test share the same classes, so read the class names from train only
    class_name_list = sorted(name for name in os.listdir(os.path.join(dataset_root_path, 'train'))
                             if name not in excluded_folder)

    # Index the images in train, valid, and test and write them to the list files
    with codecs.open(trainval_list, 'a', 'utf-8') as f_trainval, \
         codecs.open(train_list, 'a', 'utf-8') as f_train, \
         codecs.open(val_list, 'a', 'utf-8') as f_val, \
         codecs.open(test_list, 'a', 'utf-8') as f_test:
        for prefix in class_prefix:
            for i, class_name in enumerate(class_name_list):
                dataset_info['label_dict'][i] = class_name
                images = os.listdir(os.path.join(dataset_root_path, prefix, class_name))
                for image in images:
                    # skip excluded entries and samples listed as corrupted
                    if image in excluded_folder or os.path.join(prefix, class_name, image) in bad_file:
                        continue
                    line = "{}\t{}\n".format(os.path.join(dataset_root_path, prefix, class_name, image), i)
                    if prefix == 'train':
                        f_train.write(line)
                        f_trainval.write(line)
                        num_train += 1
                        num_trainval += 1
                    elif prefix == 'valid':
                        f_val.write(line)
                        f_trainval.write(line)
                        num_val += 1
                        num_trainval += 1
                    elif prefix == 'test':
                        f_test.write(line)
                        num_test += 1

    # Save the dataset information to a JSON file for use during training
    dataset_info['dataset_name'] = dataset_name
    dataset_info['num_trainval'] = num_trainval
    dataset_info['num_train'] = num_train
    dataset_info['num_val'] = num_val
    dataset_info['num_test'] = num_test
    dataset_info['num_bad'] = num_bad
    dataset_info['class_dim'] = len(class_name_list)

    # Write the dataset information as formatted JSON and print the statistics
    with codecs.open(dataset_info_list, 'w', encoding='utf-8') as f_dataset_info:
        json.dump(dataset_info, f_dataset_info, ensure_ascii=False, indent=4, separators=(',', ':'))
    print("Image lists generated: {} trainval samples, {} training samples, {} validation samples, {} test samples, {} in total; {} corrupted files.".format(num_trainval, num_train, num_val, num_test, num_train+num_val+num_test, num_bad))
    display(dataset_info)  # show the dataset information (display is available in Jupyter/IPython)

Image lists generated: 7840 trainval samples, 7190 training samples, 650 validation samples, 660 test samples, 8500 in total; 9 corrupted files.

{'dataset_name': 'Zodiac',

'num_trainval': 7840,

'num_train': 7190,

'num_val': 650,

'num_test': 660,

'num_bad': 9,

'class_dim': 12,

'label_dict': {0: 'dog',

1: 'dragon',

2: 'goat',

3: 'horse',

4: 'monkey',

5: 'ox',

6: 'pig',

7: 'rabbit',

8: 'ratt',

9: 'rooster',

10: 'snake',

11: 'tiger'}}
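The counts printed above can be cross-checked with a few lines of arithmetic. The following sketch is not part of the original notebook. It hard-codes the numbers from the summary output and checks them against the dataset description (8,509 files, 9 of them corrupted), then computes the share of each subset.

```python
# Counts copied from the dataset_info output above (hard-coded for illustration).
info = {'num_train': 7190, 'num_val': 650, 'num_test': 660, 'num_bad': 9}
total_files = 8509  # total stated in the dataset description, corrupted files included

usable = info['num_train'] + info['num_val'] + info['num_test']
num_trainval = info['num_train'] + info['num_val']

# Usable samples plus corrupted files should equal the raw file count.
consistent = (usable + info['num_bad'] == total_files)

# Share of each subset among the usable samples, in percent.
ratios = {key: round(100 * info[key] / usable, 1)
          for key in ('num_train', 'num_val', 'num_test')}

print(usable, num_trainval, consistent)
print(ratios)
```

The resulting shares are close to the 85:7.5:7.5 split mentioned in the script header.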

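[Experiment 2] Global Parameter Settings and Basic Data Processing

Abstract: Zodiac recognition is a multi-class classification problem, which we solve with a convolutional neural network. This part builds an AlexNet convolutional neural network by hand in PaddlePaddle to perform the recognition. This experiment covers the preparation needed before training: defining the global parameters, loading the dataset, preprocessing the data, defining the visualization function, and defining the log output function.

Objectives:

- Learn to define global parameters in a configuration block

- Learn to set up and load a dataset

- Learn to apply basic preprocessing to input samples

- Learn to define a visualization function that plots the training process and outputs both the figures and the underlying data

- Learn to define a log output function with logging to save logs during training

2.1 Global Parameter Settings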

    ################# Import dependencies #################
    import os
    import sys                       # required by sys.path.append below
    import json
    import codecs
    import numpy as np
    import time                      # used to measure training time
    import paddle
    import matplotlib.pyplot as plt  # third-party plotting library
    from pprint import pprint
    sys.path.append(r'D:\WorkSpace\DeepLearning\WebsiteV2\Notebook\Projects\utils')
    from getSystemInfo import getSystemInfo

    ################# Global parameter configuration #################
    #### 1. Training hyperparameters
    train_parameters = {
        # Q1. Complete the configuration of the unfinished parameters below
        # [Your codes 1]
        'project_name': 'Project012AlexNetZodiac',
        'dataset_name': 'Zodiac',
        'architecture': 'Alexnet',
        'training_data': 'train',
        'starting_time': time.strftime("%Y%m%d%H%M", time.localtime()),  # global start time
        'input_size': [3, 227, 227],         # size of the input samples
        'mean_value': [0.485, 0.456, 0.406], # ImageNet mean
        'std_value': [0.229, 0.224, 0.225],  # ImageNet standard deviation
        'num_trainval': -1,
        'num_train': -1,
        'num_val': -1,
        'num_test': -1,
        'class_dim': -1,
        'label_dict': {},
        'total_epoch': 10,                   # total number of epochs
        'batch_size': 64,                    # number of samples per batch
        'log_interval': 10,                  # print a training log every this many batches
        'eval_interval': 1,                  # evaluate every this many epochs
        'dataset_root_path': 'D:\\Workspace\\ExpDatasets\\',
        'result_root_path': 'D:\\Workspace\\ExpResults\\',
        'deployment_root_path': 'D:\\Workspace\\ExpDeployments\\',
        'useGPU': True,                      # True | False
        'learning_strategy': {               # learning rate and optimizer parameters
            'optimizer_strategy': 'Momentum',                  # optimizer: Momentum, RMS, SGD, Adam
            'learning_rate_strategy': 'CosineAnnealingDecay',  # fixed, PiecewiseDecay, CosineAnnealingDecay, ExponentialDecay, PolynomialDecay
            'learning_rate': 0.001,                            # fixed or initial learning rate
            'momentum': 0.9,                                   # momentum
            'Piecewise_boundaries': [60, 80, 90],              # piecewise decay: epochs at which the rate changes
            'Piecewise_values': [0.01, 0.001, 0.0001, 0.00001],  # piecewise decay: learning rate of each stage
            'Exponential_gamma': 0.9,                          # exponential decay: decay factor
            'Polynomial_decay_steps': 10,                      # polynomial decay: decay once every this many epochs
            'verbose': True                                    # print learning-rate changes, True | False
        },
        'augmentation_strategy': {           # data augmentation parameters
            'withAugmentation': True,
            'augmentation_prob': 0.5,        # probability of applying augmentation
            'rotate_angle': 15,              # angle of random rotation
            'Hflip_prob': 0.5,               # probability of random horizontal flip
            'brightness': 0.4,
            'contrast': 0.4,
            'saturation': 0.4,
            'hue': 0.4,
        },
    }

    #### 2. Short aliases for the parameter groups
    args = train_parameters
    argsAS = args['augmentation_strategy']
    argsLS = train_parameters['learning_strategy']
    model_name = args['dataset_name'] + '_' + args['architecture']

    #### 3. Device mode [GPU|CPU]
    # useGPU=True selects the first GPU, useGPU=False selects the CPU
    def init_device(useGPU=args['useGPU']):
        paddle.device.set_device('gpu:0' if useGPU else 'cpu')
    init_device()

    #### 4. Paths for models, training, logs, and figures
    # 4.1 Dataset paths
    dataset_root_path = os.path.join(args['dataset_root_path'], args['dataset_name'])
    json_dataset_info = os.path.join(dataset_root_path, 'dataset_info.json')
    # 4.2 Paths used during training
    result_root_path = os.path.join(args['result_root_path'], args['project_name'])
    checkpoint_models_path = os.path.join(result_root_path, 'checkpoint_models')  # models saved during training
    final_figures_path = os.path.join(result_root_path, 'final_figures')          # training curves
    final_models_path = os.path.join(result_root_path, 'final_models')            # final models for deployment and inference
    logs_path = os.path.join(result_root_path, 'logs')                            # training logs
    # 4.3 checkpoint_ paths define the model used to resume training
    # checkpoint_path = os.path.join(result_path, model_name + '_final')
    # checkpoint_model = os.path.join(args['result_root_path'], model_name + '_' + args['checkpoint_time'], 'checkpoint_models', args['checkpoint_model'])
    # 4.4 Paths (files) used for validation and testing
    deployment_root_path = os.path.join(args['deployment_root_path'], args['project_name'])
    deployment_checkpoint_path = os.path.join(deployment_root_path, 'checkpoint_models', model_name + '_final')
    deployment_final_models_path = os.path.join(deployment_root_path, 'final_models', model_name + '_final')
    deployment_final_figures_path = os.path.join(deployment_root_path, 'final_figures')
    deployment_logs_path = os.path.join(deployment_root_path, 'logs')
    # 4.5 Create the result directories
    def init_result_path():
        for path in (final_models_path, final_figures_path, logs_path, checkpoint_models_path):
            os.makedirs(path, exist_ok=True)
    init_result_path()

    #### 5. Fill in the dataset-dependent parameters
    def init_train_parameters():
        with open(json_dataset_info, 'r', encoding='utf-8') as f:
            dataset_info = json.load(f)
        for key in ('num_trainval', 'num_train', 'num_val', 'num_test', 'class_dim', 'label_dict'):
            train_parameters[key] = dataset_info[key]
    init_train_parameters()

    ##############################################################################################
    # Print the training parameters train_parameters
    # if __name__ == '__main__':
    #     pprint(args)
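One detail is worth noting when `dataset_info.json` is loaded back into `train_parameters`. JSON object keys are always strings, so the integer keys of `label_dict` written in Experiment 1 come back as `'0'`, `'1'`, and so on, which is how they appear in the training log later. The sketch below illustrates the round trip with a shortened label mapping. The helper `label_name` is hypothetical and not part of the original code.

```python
import json

label_dict = {0: 'dog', 1: 'dragon', 2: 'goat'}  # shortened copy of the label mapping

# Serializing converts the integer keys to strings; loading does not convert them back.
restored = json.loads(json.dumps(label_dict))

def label_name(labels, class_id):
    """Look up a class name by integer id in a dict loaded from JSON (hypothetical helper)."""
    return labels[str(class_id)]

print(list(restored.keys()))
print(label_name(restored, 1))
```

Converting the id with `str()` at lookup time keeps the stored JSON unchanged. Rebuilding the dictionary with integer keys right after loading would work as well.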

2.2 Dataset Definition and Data Preprocessing

2.2.1 Dataset Definition

    import os
    import sys
    import cv2
    import numpy as np
    import paddle
    import paddle.vision.transforms as T
    from paddle.io import DataLoader

    input_size = (args['input_size'][1], args['input_size'][2])

    # 1. Dataset definition
    class ZodiacDataset(paddle.io.Dataset):
        def __init__(self, dataset_root_path, mode='test', withAugmentation=argsAS['withAugmentation']):
            assert mode in ['train', 'val', 'test', 'trainval']
            self.data = []
            self.withAugmentation = withAugmentation
            # The list files were written as UTF-8, so read them as UTF-8
            with open(os.path.join(dataset_root_path, mode+'.txt'), encoding='utf-8') as f:
                for line in f.readlines():
                    info = line.strip().split('\t')
                    # The lists store absolute paths, so os.path.join returns info[0] unchanged
                    image_path = os.path.join(dataset_root_path, 'Data', info[0].strip())
                    if len(info) == 2:
                        self.data.append([image_path, info[1].strip()])
                    elif len(info) == 1:
                        self.data.append([image_path, -1])
            # prob is drawn once per dataset object, so the choice between the two
            # pipelines is made at construction time, not per sample
            prob = np.random.random()
            if mode in ['train', 'trainval'] and prob >= argsAS['augmentation_prob']:
                self.transforms = T.Compose([
                    T.RandomResizedCrop(input_size),
                    T.RandomHorizontalFlip(argsAS['Hflip_prob']),
                    T.RandomRotation(argsAS['rotate_angle']),
                    T.ColorJitter(brightness=argsAS['brightness'], contrast=argsAS['contrast'], saturation=argsAS['saturation'], hue=argsAS['hue']),
                    T.ToTensor(),
                    T.Normalize(mean=args['mean_value'], std=args['std_value'])
                ])
            else:  # mode in ['val', 'test'] or mode in ['train', 'trainval'] and prob < argsAS['augmentation_prob']
                self.transforms = T.Compose([
                    T.Resize(input_size),
                    T.ToTensor(),
                    T.Normalize(mean=args['mean_value'], std=args['std_value'])
                ])

        # Get a single sample by index
        def __getitem__(self, index):
            image_path, label = self.data[index]
            image = cv2.imread(image_path, 1)  # cv2 forces the image into color mode (flag 0 = grayscale, 1 = color)
            if self.withAugmentation == True:
                image = self.transforms(image)
            label = np.array(label, dtype='int64')
            return image, label

        # Get the total number of samples
        def __len__(self):
            return len(self.data)

    ###############################################################
    # Test the dataset class: print the shape of a sample with and without preprocessing
    if __name__ == "__main__":
        import random
        # 1. Load the data
        dataset_val_withoutAugmentation = ZodiacDataset(dataset_root_path, mode='val', withAugmentation=False)
        id = random.randrange(0, len(dataset_val_withoutAugmentation))
        img1 = dataset_val_withoutAugmentation[id][0]
        dataset_val_withAugmentation = ZodiacDataset(dataset_root_path, mode='val')
        img2 = dataset_val_withAugmentation[id][0]
        print('Validation sample {},\n shape before preprocessing: {},\n shape after preprocessing: {}'.format(id, img1.shape, img2.shape))

Validation sample 487,

shape before preprocessing: (200, 200, 3),

shape after preprocessing: [3, 227, 227]

2.2.2 Defining the Data Loaders

    import os
    import sys
    from paddle.io import DataLoader

    # 1. Build the datasets
    dataset_trainval = ZodiacDataset(dataset_root_path, mode='trainval')
    dataset_train = ZodiacDataset(dataset_root_path, mode='train')
    dataset_val = ZodiacDataset(dataset_root_path, mode='val')
    dataset_test = ZodiacDataset(dataset_root_path, mode='test')

    # 2. Create the data loaders
    trainval_reader = DataLoader(dataset_trainval, batch_size=args['batch_size'], shuffle=True, drop_last=True)
    train_reader = DataLoader(dataset_train, batch_size=args['batch_size'], shuffle=True, drop_last=True)
    val_reader = DataLoader(dataset_val, batch_size=args['batch_size'], shuffle=False, drop_last=False)
    test_reader = DataLoader(dataset_test, batch_size=args['batch_size'], shuffle=False, drop_last=False)

    ######################################################################################
    # Test the data loader
    if __name__ == "__main__":
        for i, (image, label) in enumerate(val_reader()):
            if i == 2:
                break
            print('Validation batch_{} image shape: {}, label shape: {}'.format(i, image.shape, label.shape))

Validation batch_0 image shape: [64, 3, 227, 227], label shape: [64]

Validation batch_1 image shape: [64, 3, 227, 227], label shape: [64]
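The `drop_last` setting determines how many batches each loader yields per epoch. The sketch below is an illustration rather than part of the original notebook. It works out the batch counts for the sample numbers reported in Experiment 1, and the helper name `num_batches` is an assumption.

```python
import math

def num_batches(num_samples, batch_size, drop_last):
    """Number of batches a loader yields per epoch."""
    if drop_last:
        return num_samples // batch_size         # the incomplete final batch is discarded
    return math.ceil(num_samples / batch_size)   # the incomplete final batch is kept

batch_size = 64
train_batches = num_batches(7190, batch_size, drop_last=True)
val_batches = num_batches(650, batch_size, drop_last=False)
last_val_batch = 650 - (val_batches - 1) * batch_size  # size of the final validation batch

print(train_batches, val_batches, last_val_batch)
```

Because the final validation batch is smaller than the others, any average computed over batches needs to be weighted by batch size to be exact.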

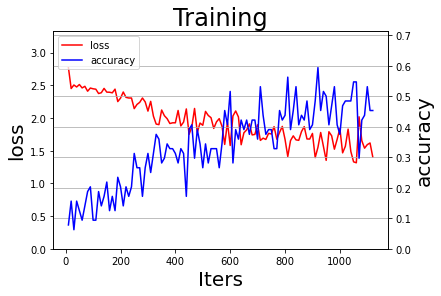

2.3 Defining the Training-Process Visualization Function

    sys.path.append(r'D:\WorkSpace\DeepLearning\WebsiteV2\Notebook\Projects')  # location of the utility modules
    from utils.getVisualization import draw_process  # import the plotting helper

    ######################################################################################
    # Test the visualization function
    if __name__ == '__main__':
        try:
            train_log = np.load(os.path.join(final_figures_path, 'train.npy'))
            print('Visualization of the training data:')
            draw_process('Training', 'loss', 'accuracy', iters=train_log[0], losses=train_log[1], accuracies=train_log[2], final_figures_path=final_figures_path, figurename='train', isShow=True)
        except Exception:
            # No saved training log is available, so plot the built-in test data
            print('The figure below uses test data.')
            draw_process('Training', 'loss', 'accuracy', figurename='default', isShow=True)

The figure below uses test data.

2.4 Defining the Log Output Function

    sys.path.append(r'D:\WorkSpace\DeepLearning\WebsiteV2\Notebook\Projects')
    from utils.getLogging import init_log_config
    logger = init_log_config(logs_path=logs_path, model_name=model_name)

    ######################################################################################
    # Test the log output
    if __name__ == '__main__':
        system_info = json.dumps(getSystemInfo(), indent=4, ensure_ascii=False, sort_keys=False, separators=(',', ':'))
        logger.info('系统基本信息:')  # "Basic system information:"
        logger.info(system_info)

INFO 2022-10-09 22:35:29,081 3700536747.py:10] 系统基本信息:

INFO 2022-10-09 22:35:29,082 3700536747.py:11] {

"操作系统":"Windows-10-10.0.22000-SP0",

"CPU":"Intel(R) Core(TM) i7-7700K CPU @ 4.20GHz",

"内存":"8.97G/15.96G (56.20%)",

"GPU":"b'GeForce GTX 1080 Ti' 2.35G/11.00G (0.21%)",

"CUDA":"7.6.5",

"cuDNN":"7.6.5",

"Paddle":"2.3.2"

}
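The helper `init_log_config` lives in the author's `utils` package and is not shown in this tutorial. As a rough idea of what such a helper might look like, the sketch below uses only Python's standard `logging` module. The function name `make_logger`, the log file name, and the format string are assumptions chosen to resemble the log lines above. They are not the author's implementation.

```python
import logging
import os
import tempfile

def make_logger(logs_path, model_name):
    """Create a logger that writes to the console and to <logs_path>/<model_name>.log."""
    os.makedirs(logs_path, exist_ok=True)
    logger = logging.getLogger(model_name)
    logger.setLevel(logging.INFO)
    logger.handlers.clear()  # avoid duplicate handlers when a notebook cell is re-run
    fmt = logging.Formatter('%(levelname)s %(asctime)s %(filename)s:%(lineno)d] %(message)s')
    log_file = os.path.join(logs_path, model_name + '.log')
    for handler in (logging.StreamHandler(), logging.FileHandler(log_file, encoding='utf-8')):
        handler.setFormatter(fmt)
        logger.addHandler(handler)
    return logger, log_file

# Demonstration in a temporary directory
demo_dir = tempfile.mkdtemp()
demo_logger, demo_file = make_logger(demo_dir, 'Zodiac_Alexnet')
demo_logger.info('logging is configured')
for handler in demo_logger.handlers:
    handler.flush()
with open(demo_file, encoding='utf-8') as f:
    logged_text = f.read()
```

Clearing existing handlers before adding new ones matters in notebooks, where re-running a cell would otherwise make every message appear several times.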

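[Experiment 3] Model Training and Evaluation

Abstract: Zodiac classification is a multi-class classification problem, which we solve with a convolutional neural network. This part builds an AlexNet convolutional neural network by hand in PaddlePaddle to perform the recognition, with a Softmax activation applied to the last layer to complete the classification.

Objectives:

- Master the construction and basic principles of convolutional neural networks

- Understand thoroughly the roles of the training, validation, training-validation, and test sets in model training

- Learn to define a neural network class from a network topology diagram (Paddle 2.0+)

- Learn the two testing methods: online testing and offline testing

- Learn to define several optimization methods and select among them in the global parameters

3.1 Configuring the Network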

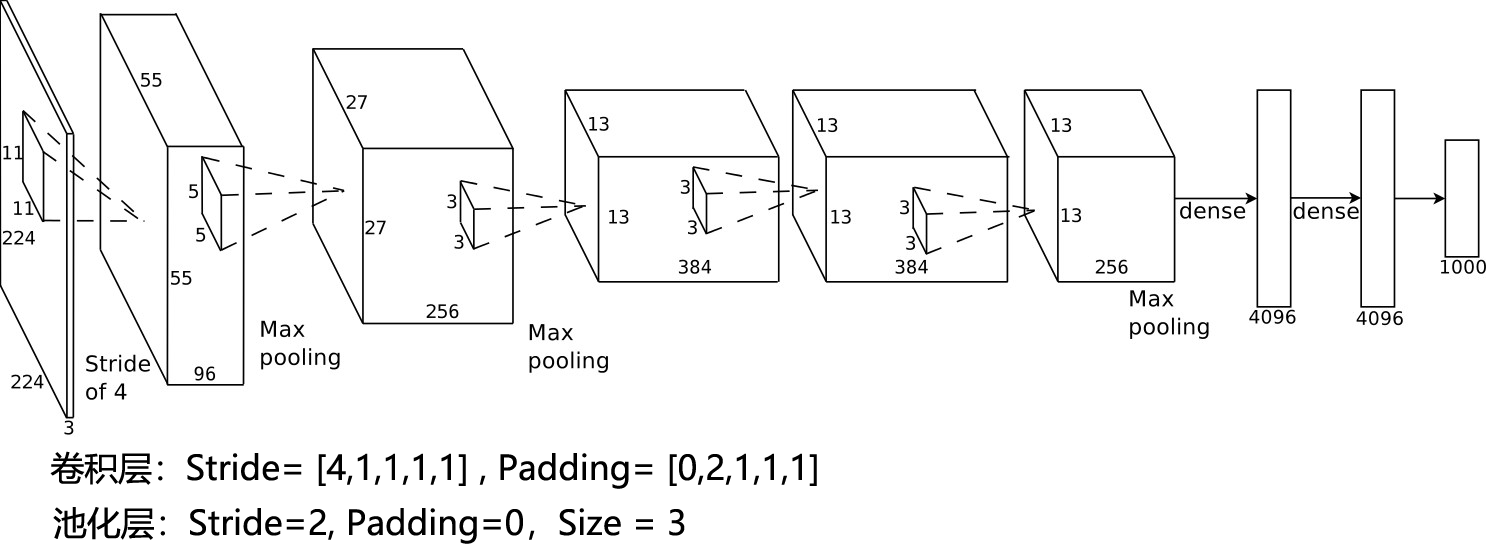

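3.1.1 Network Topology Diagram

Note on the input size: the original AlexNet paper states a 224×224×3 input, but the layer arithmetic of the first convolution (11×11 kernel, stride 4, no padding, 55×55 output) only works out for a 227×227×3 crop, which is the size used in this project. Many later framework implementations standardized on 224×224×3.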
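The two input sizes can be compared with the standard output-size formula, floor((W + 2P - K) / S) + 1. The sketch below is an illustration that is not part of the original notebook. The `padding=2` variant shows one way a 224×224 input can still produce a 55×55 feature map. That this is how a given framework handles 224×224 input is an assumption that the text above does not state.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution or pooling layer (floor division)."""
    return (size + 2 * padding - kernel) // stride + 1

# First AlexNet layer: 11x11 kernel, stride 4
out_227 = conv_out(227, kernel=11, stride=4)                 # no padding
out_224 = conv_out(224, kernel=11, stride=4)                 # no padding
out_224_pad = conv_out(224, kernel=11, stride=4, padding=2)  # assumed padding of 2

# The remaining 227-input stages of the table: pool1, pool2, pool5 (3x3, stride 2)
pool1 = conv_out(out_227, kernel=3, stride=2)
pool2 = conv_out(pool1, kernel=3, stride=2)  # conv2 keeps 27x27 (kernel 5, padding 2)
pool5 = conv_out(pool2, kernel=3, stride=2)  # conv3 to conv5 keep 13x13 (kernel 3, padding 1)

print(out_227, out_224, out_224_pad)
print(pool1, pool2, pool5)
```

Only the 227×227 input (or 224×224 with padding) reaches the 6×6 map that the first fully connected layer expects.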

3.1.2 Network Parameter Configuration Table

| Layer | Input | Kernels_num | Kernels_size | Stride | Padding | PoolingType | Output | Parameters |
|---|---|---|---|---|---|---|---|---|
| Input | 3×227×227 | | | | | | | |
| Conv1 | 3×227×227 | 96 | 3×11×11 | 4 | 0 | | 96×55×55 | (3×11×11+1)×96=34944 |
| Pool1 | 96×55×55 | 96 | 96×3×3 | 2 | 0 | max | 96×27×27 | 0 |
| Conv2 | 96×27×27 | 256 | 96×5×5 | 1 | 2 | | 256×27×27 | (96×5×5+1)×256=614656 |
| Pool2 | 256×27×27 | 256 | 256×3×3 | 2 | 0 | max | 256×13×13 | 0 |
| Conv3 | 256×13×13 | 384 | 256×3×3 | 1 | 1 | | 384×13×13 | (256×3×3+1)×384=885120 |
| Conv4 | 384×13×13 | 384 | 384×3×3 | 1 | 1 | | 384×13×13 | (384×3×3+1)×384=1327488 |
| Conv5 | 384×13×13 | 256 | 384×3×3 | 1 | 1 | | 256×13×13 | (384×3×3+1)×256=884992 |
| Pool5 | 256×13×13 | 256 | 256×3×3 | 2 | 0 | max | 256×6×6 | 0 |
| FC6 | (256×6×6)×1 | | | | | | 4096×1 | (9216+1)×4096=37752832 |
| FC7 | 4096×1 | | | | | | 4096×1 | (4096+1)×4096=16781312 |
| FC8 | 4096×1 | | | | | | 1000×1 | (4096+1)×1000=4097000 |
| Output | 1000×1 | | | | | | | |
| Total | | | | | | | | 62378344 |

The convolutional layers account for 3,747,200 of these parameters, about 6% of the total.
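The parameter counts in the table follow directly from the layer shapes. The sketch below reproduces them in plain Python as a cross-check. The helper names `conv_params` and `fc_params` are introduced here for illustration only.

```python
def conv_params(in_channels, kernel, out_channels):
    """Weights plus one bias per output channel of a square convolution."""
    return (in_channels * kernel * kernel + 1) * out_channels

def fc_params(in_features, out_features):
    """Weights plus one bias per output unit of a fully connected layer."""
    return (in_features + 1) * out_features

# (in_channels, kernel, out_channels) for Conv1 to Conv5, taken from the table
conv_layers = [(3, 11, 96), (96, 5, 256), (256, 3, 384), (384, 3, 384), (384, 3, 256)]
# (in_features, out_features) for FC6 to FC8
fc_layers = [(256 * 6 * 6, 4096), (4096, 4096), (4096, 1000)]

conv_total = sum(conv_params(*layer) for layer in conv_layers)
fc_total = sum(fc_params(*layer) for layer in fc_layers)
total = conv_total + fc_total
conv_share = 100 * conv_total / total  # percentage of parameters in the conv layers

print(conv_total, fc_total, total, round(conv_share, 1))
```

Almost all parameters sit in the fully connected layers, with FC6 alone holding more than half of them.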

    import paddle
    import paddle.nn as nn

    class Alexnet(nn.Layer):
        def __init__(self, num_classes=1000):
            super(Alexnet, self).__init__()
            self.num_classes = num_classes
            # Convolutional feature extractor: Conv1 to Pool5 in the table above
            self.features = nn.Sequential(
                nn.Conv2D(in_channels=3, out_channels=96, kernel_size=11, stride=4, padding=0),
                nn.ReLU(),
                nn.MaxPool2D(kernel_size=3, stride=2),
                nn.Conv2D(in_channels=96, out_channels=256, kernel_size=5, stride=1, padding=2),
                nn.ReLU(),
                nn.MaxPool2D(kernel_size=3, stride=2),
                nn.Conv2D(in_channels=256, out_channels=384, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.Conv2D(in_channels=384, out_channels=384, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.Conv2D(in_channels=384, out_channels=256, kernel_size=3, stride=1, padding=1),
                nn.ReLU(),
                nn.MaxPool2D(kernel_size=3, stride=2),
            )
            # Classifier: FC6 to FC8
            self.fc = nn.Sequential(
                nn.Linear(in_features=256*6*6, out_features=4096),
                nn.ReLU(),
                nn.Dropout(),
                nn.Linear(in_features=4096, out_features=4096),
                nn.ReLU(),
                nn.Dropout(),
                nn.Linear(in_features=4096, out_features=num_classes),
            )

        def forward(self, inputs):
            x = self.features(inputs)
            x = paddle.flatten(x, 1)  # flatten everything except the batch dimension
            x = self.fc(x)
            return x

    #### Network test
    if __name__ == '__main__':
        model = Alexnet()
        paddle.summary(model, (1,3,227,227))

---------------------------------------------------------------------------

Layer (type) Input Shape Output Shape Param #

===========================================================================

Conv2D-1 [[1, 3, 227, 227]] [1, 96, 55, 55] 34,944

ReLU-1 [[1, 96, 55, 55]] [1, 96, 55, 55] 0

MaxPool2D-1 [[1, 96, 55, 55]] [1, 96, 27, 27] 0

Conv2D-2 [[1, 96, 27, 27]] [1, 256, 27, 27] 614,656

ReLU-2 [[1, 256, 27, 27]] [1, 256, 27, 27] 0

MaxPool2D-2 [[1, 256, 27, 27]] [1, 256, 13, 13] 0

Conv2D-3 [[1, 256, 13, 13]] [1, 384, 13, 13] 885,120

ReLU-3 [[1, 384, 13, 13]] [1, 384, 13, 13] 0

Conv2D-4 [[1, 384, 13, 13]] [1, 384, 13, 13] 1,327,488

ReLU-4 [[1, 384, 13, 13]] [1, 384, 13, 13] 0

Conv2D-5 [[1, 384, 13, 13]] [1, 256, 13, 13] 884,992

ReLU-5 [[1, 256, 13, 13]] [1, 256, 13, 13] 0

MaxPool2D-3 [[1, 256, 13, 13]] [1, 256, 6, 6] 0

Linear-1 [[1, 9216]] [1, 4096] 37,752,832

ReLU-6 [[1, 4096]] [1, 4096] 0

Dropout-1 [[1, 4096]] [1, 4096] 0

Linear-2 [[1, 4096]] [1, 4096] 16,781,312

ReLU-7 [[1, 4096]] [1, 4096] 0

Dropout-2 [[1, 4096]] [1, 4096] 0

Linear-3 [[1, 4096]] [1, 1000] 4,097,000

===========================================================================

Total params: 62,378,344

Trainable params: 62,378,344

Non-trainable params: 0

---------------------------------------------------------------------------

Input size (MB): 0.59

Forward/backward pass size (MB): 11.05

Params size (MB): 237.95

Estimated Total Size (MB): 249.59

---------------------------------------------------------------------------
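The "Params size (MB)" figure in the summary is the parameter count times 4 bytes, assuming 32-bit floats. The sketch below is an illustration that is not part of the original notebook. It checks that figure and also works out how the total changes when the 1000-way FC8 layer is replaced by the 12-way layer used for the zodiac classes, which matches the total reported later in the training log.

```python
BYTES_PER_FLOAT32 = 4

def params_size_mb(num_params):
    """Memory taken by the parameters, in MB, assuming float32 storage."""
    return num_params * BYTES_PER_FLOAT32 / 1024 ** 2

total_1000 = 62_378_344        # total from the summary above (1000 classes)
fc8_1000 = (4096 + 1) * 1000   # last layer with 1000 outputs
fc8_12 = (4096 + 1) * 12       # last layer with 12 outputs

# Swap the 1000-way layer for the 12-way layer
total_12 = total_1000 - fc8_1000 + fc8_12

print(round(params_size_mb(total_1000), 2))
print(fc8_12, total_12, round(params_size_mb(total_12), 2))
```

Changing the number of classes only affects the last layer, so the model shrinks by roughly four million parameters.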

3.2 Defining the Optimization Method

    import paddle
    sys.path.append(r'D:\WorkSpace\DeepLearning\WebsiteV2\Notebook\Projects')
    from utils.getOptimizer import learning_rate_setting, optimizer_setting

    # 5. Learning-rate output test
    if __name__ == '__main__':
        # print('当前学习率策略为: {} + {}'.format(argsLS['optimizer_strategy'], argsLS['learning_rate_strategy']))
        linear = paddle.nn.Linear(10, 10)
        lr = learning_rate_setting(args=args, argsO=argsLS)
        optimizer = optimizer_setting(linear, lr, argsO=argsLS)
        # 'fixed' is a learning-rate strategy, so test that key (argsO was undefined here)
        if argsLS['learning_rate_strategy'] == 'fixed':
            print('learning = {}'.format(argsLS['learning_rate']))
        else:
            for epoch in range(10):
                for batch_id in range(10):
                    x = paddle.uniform([10, 10])
                    out = linear(x)
                    loss = paddle.mean(out)
                    loss.backward()
                    optimizer.step()
                    optimizer.clear_gradients()
                    # lr.step()  # update the learning rate once per batch
                lr.step()        # update the learning rate once per epoch

当前学习率策略为: Momentum + CosineAnnealingDecay

Epoch 0: CosineAnnealingDecay set learning rate to 0.001.

Epoch 1: CosineAnnealingDecay set learning rate to 0.0009999921320324326.

Epoch 2: CosineAnnealingDecay set learning rate to 0.0009999685283773503.

Epoch 3: CosineAnnealingDecay set learning rate to 0.000999929189777604.

Epoch 4: CosineAnnealingDecay set learning rate to 0.0009998741174712532.

Epoch 5: CosineAnnealingDecay set learning rate to 0.0009998033131915264.

Epoch 6: CosineAnnealingDecay set learning rate to 0.0009997167791667666.

Epoch 7: CosineAnnealingDecay set learning rate to 0.0009996145181203613.

Epoch 8: CosineAnnealingDecay set learning rate to 0.0009994965332706571.

Epoch 9: CosineAnnealingDecay set learning rate to 0.0009993628283308578.

Epoch 10: CosineAnnealingDecay set learning rate to 0.000999213407508908.
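The printed values follow the cosine annealing schedule, lr(t) = eta_min + (lr_0 - eta_min) · (1 + cos(π·t / T_max)) / 2. The sketch below evaluates this closed form in plain Python. The values `t_max=560` and `eta_min=0` are assumptions: they are inferred from the printed learning rates and do not appear in the visible configuration. The library may also compute the schedule recursively rather than in closed form.

```python
import math

def cosine_annealing_lr(step, base_lr=0.001, t_max=560, eta_min=0.0):
    """Closed-form cosine annealing; t_max and eta_min are inferred, not from the source."""
    return eta_min + (base_lr - eta_min) * (1 + math.cos(math.pi * step / t_max)) / 2

for step in range(3):
    print(step, cosine_annealing_lr(step))
```

With such a large `t_max` the learning rate barely moves over 10 scheduler steps, which is why all printed values stay close to 0.001.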

3.3 Defining the Evaluation Function

    import sys
    import numpy as np
    import paddle
    import paddle.nn.functional as F
    from paddle.static import InputSpec

    def eval(model, data_reader, verbose=0):
        accuracies_top1 = []
        accuracies_top5 = []
        losses = []
        n_total = 0
        for batch_id, (image, label) in enumerate(data_reader):
            n_batch = len(label)
            n_total = n_total + n_batch
            label = paddle.unsqueeze(label, axis=1)
            loss, acc = model.eval_batch([image], [label])
            losses.append(loss[0])
            # Weight each batch accuracy by its batch size
            accuracies_top1.append(acc[0][0]*n_batch)
            accuracies_top5.append(acc[0][1]*n_batch)
            if verbose == 1:
                print('\r Batch:{}/{}, acc_top1:[{:.5f}], acc_top5:[{:.5f}]'.format(batch_id+1, len(data_reader), acc[0][0], acc[0][1]), end='')
        avg_loss = np.sum(losses)/n_total               # loss is treated as the accumulated value of each batch
        avg_acc_top1 = np.sum(accuracies_top1)/n_total  # the metric is the mean of each batch
        avg_acc_top5 = np.sum(accuracies_top5)/n_total
        return avg_loss, avg_acc_top1, avg_acc_top5

    ##############################################################
    if __name__ == '__main__':
        try:
            # Set the shapes of the input samples
            input_spec = InputSpec(shape=[None] + args['input_size'], dtype='float32', name='image')
            label_spec = InputSpec(shape=[None, 1], dtype='int64', name='label')
            # Load the model
            network = Alexnet(num_classes=12)
            model = paddle.Model(network, input_spec, label_spec)  # instantiate the model
            model.load(deployment_checkpoint_path)                 # load the fine-tuned parameters
            model.prepare(loss=paddle.nn.CrossEntropyLoss(),                # set the loss
                          metrics=paddle.metric.Accuracy(topk=(1,5)))      # set the evaluation metric
            # Run the evaluation and print the loss and accuracy
            print('Starting evaluation...')
            avg_loss, avg_acc_top1, avg_acc_top5 = eval(model, val_reader(), verbose=1)
            print('\r [Validation set] loss: {:.5f}, top-1 accuracy: {:.5f}, top-5 accuracy: {:.5f} \n'.format(avg_loss, avg_acc_top1, avg_acc_top5), end='')
            avg_loss, avg_acc_top1, avg_acc_top5 = eval(model, test_reader(), verbose=1)
            print('\r [Test set] loss: {:.5f}, top-1 accuracy: {:.5f}, top-5 accuracy: {:.5f}'.format(avg_loss, avg_acc_top1, avg_acc_top5), end='')
        except Exception:
            print('Data not found; skipping the test')

Starting evaluation...

[Validation set] loss: 0.03308, top-1 accuracy: 0.32923, top-5 accuracy: 0.77231

[Test set] loss: 0.03435, top-1 accuracy: 0.34394, top-5 accuracy: 0.75909
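The evaluation function multiplies each batch accuracy by the batch size before dividing by the total number of samples. The sketch below shows why this weighting matters when the last batch is smaller than the others. The accuracy values are made up for the illustration, and `weighted_mean` is a hypothetical helper. The same weighting would apply to a per-batch mean loss, but whether `eval_batch` returns a mean or a sum depends on the loss configuration and should be checked first.

```python
def weighted_mean(batch_values, batch_sizes):
    """Average of per-batch means, weighted by the number of samples in each batch."""
    total = sum(batch_sizes)
    return sum(value * size for value, size in zip(batch_values, batch_sizes)) / total

# 650 validation samples with batch size 64: ten full batches and one batch of 10
batch_sizes = [64] * 10 + [10]
# Hypothetical per-batch accuracies: 0.25 on the full batches, 1.0 on the small one
batch_acc = [0.25] * 10 + [1.0]

plain = sum(batch_acc) / len(batch_acc)          # treats every batch as equally large
weighted = weighted_mean(batch_acc, batch_sizes)  # exact accuracy over all 650 samples

print(round(plain, 4), round(weighted, 4))
```

The unweighted mean overstates the result here because the small final batch counts as much as a full one.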

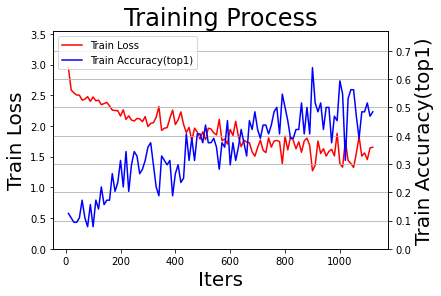

3.4 Model Training and Online Testing

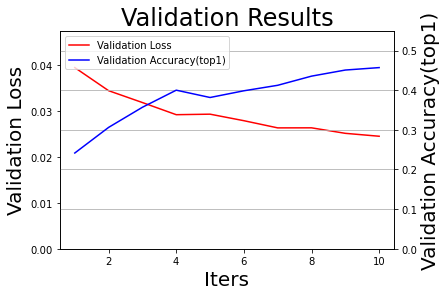

    import os
    import time
    import json
    import paddle
    from paddle.static import InputSpec

    # Initial configuration
    total_epoch = train_parameters['total_epoch']

    # Lists used for plotting
    all_train_iters = []
    all_train_losses = []
    all_train_accs_top1 = []
    all_train_accs_top5 = []
    all_test_losses = []
    all_test_iters = []
    all_test_accs_top1 = []
    all_test_accs_top5 = []

    def train(model):
        # Temporary variables; `start` is the global timer set in the main block below
        num_batch = 0
        best_result = 0
        best_result_id = 0
        elapsed = 0
        for epoch in range(1, total_epoch+1):
            for batch_id, (image, label) in enumerate(train_reader()):
                num_batch += 1
                label = paddle.unsqueeze(label, axis=1)
                loss, acc = model.train_batch([image], [label])

                if num_batch % train_parameters['log_interval'] == 0:  # log every log_interval batches; suits large datasets
                    avg_loss = loss[0][0]
                    acc_top1 = acc[0][0]
                    acc_top5 = acc[0][1]
                    elapsed_step = time.perf_counter() - elapsed - start  # time since the previous log entry
                    elapsed = time.perf_counter() - start
                    logger.info('Epoch:{}/{}, batch:{}, train_loss:[{:.5f}], acc_top1:[{:.5f}], acc_top5:[{:.5f}]({:.2f}s)'
                                .format(epoch, total_epoch, num_batch, loss[0][0], acc[0][0], acc[0][1], elapsed_step))

                    # Record the training process for plotting loss and accuracy
                    all_train_iters.append(num_batch)
                    all_train_losses.append(avg_loss)
                    all_train_accs_top1.append(acc_top1)
                    all_train_accs_top5.append(acc_top5)

            # Evaluate on the validation set at regular intervals
            if epoch % train_parameters['eval_interval'] == 0 or epoch == total_epoch:
                avg_loss, avg_acc_top1, avg_acc_top5 = eval(model, val_reader())
                logger.info('[validation] Epoch:{}/{}, val_loss:[{:.5f}], val_top1:[{:.5f}], val_top5:[{:.5f}]'.format(epoch, total_epoch, avg_loss, avg_acc_top1, avg_acc_top5))

                # Record the evaluation results for plotting loss and accuracy
                all_test_iters.append(epoch)
                all_test_losses.append(avg_loss)
                all_test_accs_top1.append(avg_acc_top1)
                all_test_accs_top5.append(avg_acc_top5)

                # Save the best-performing model as the final model
                if avg_acc_top1 > best_result:
                    best_result = avg_acc_top1
                    best_result_id = epoch
                    # fine-tuning model, used for tuning and resuming training
                    model.save(os.path.join(checkpoint_models_path, model_name + '_final'))
                    # inference model, used for deployment and prediction
                    model.save(os.path.join(final_models_path, model_name + '_final'), training=False)
                    # "saved the current model as the best model"
                    logger.info('已保存当前测试模型(epoch={})为最优模型:{}_final'.format(best_result_id, model_name))
                # "best top-1 accuracy so far"
                logger.info('最优top1测试精度:{:.5f} (epoch={})'.format(best_result, best_result_id))

        # "training finished: final accuracy, total time, saved model name"
        logger.info('训练完成,最终性能accuracy={:.5f}(epoch={}), 总耗时{:.2f}s, 已将其保存为:{}_final'.format(best_result, best_result_id, time.perf_counter() - start, model_name))

    #### Main training routine ####
    if __name__ == '__main__':
        system_info = json.dumps(getSystemInfo(), indent=4, ensure_ascii=False, sort_keys=False, separators=(',', ':'))
        logger.info('系统基本信息')  # "Basic system information"
        logger.info(system_info)

        # Save the hyperparameters of this training run
        data = json.dumps(train_parameters, indent=4, ensure_ascii=False, sort_keys=False, separators=(',', ':'))  # formatted parameter list
        logger.info(data)

        # Start training: log the model, the dataset, and the training subset
        logger.info('训练参数保存完毕,使用{}模型, 训练{}数据, 训练集{}, 启动训练...'.format(train_parameters['architecture'],train_parameters['dataset_name'],train_parameters['training_data']))
        logger.info('当前模型目录为:{}'.format(model_name + '_' + train_parameters['starting_time']))  # current model directory

        # Set the shapes of the input samples
        input_spec = InputSpec(shape=[None] + train_parameters['input_size'], dtype='float32', name='image')
        label_spec = InputSpec(shape=[None, 1], dtype='int64', name='label')

        # Build AlexNet and wrap it in a paddle.Model
        network = Alexnet(num_classes=12)
        model = paddle.Model(network, input_spec, label_spec)
        logger.info('模型参数信息:')   # "Model parameter information:"
        logger.info(model.summary())   # print the details of the network

        # Set the learning rate, optimizer, loss function, and evaluation metric
        lr = learning_rate_setting(args=args, argsO=argsLS)
        optimizer = optimizer_setting(model, lr, argsO=argsLS)
        model.prepare(optimizer, paddle.nn.CrossEntropyLoss(), paddle.metric.Accuracy(topk=(1,5)))

        # Start training
        start = time.perf_counter()
        train(model)
        logger.info('训练完毕,结果路径{}.'.format(result_root_path))  # "training done, result path"

        # Plot the training process
        logger.info('Done.')
        draw_process("Training Process", 'Train Loss', 'Train Accuracy(top1)', all_train_iters, all_train_losses, all_train_accs_top1, final_figures_path=final_figures_path, figurename='train', isShow=True)
        draw_process("Validation Results", 'Validation Loss', 'Validation Accuracy(top1)', all_test_iters, all_test_losses, all_test_accs_top1, final_figures_path=final_figures_path, figurename='val', isShow=True)

INFO 2022-10-09 22:35:55,048 1244777867.py:83] 系统基本信息

INFO 2022-10-09 22:35:55,049 1244777867.py:84] {

"操作系统":"Windows-10-10.0.22000-SP0",

"CPU":"Intel(R) Core(TM) i7-7700K CPU @ 4.20GHz",

"内存":"9.52G/15.96G (59.60%)",

"GPU":"b'GeForce GTX 1080 Ti' 3.46G/11.00G (0.31%)",

"CUDA":"7.6.5",

"cuDNN":"7.6.5",

"Paddle":"2.3.2"

}

INFO 2022-10-09 22:35:55,050 1244777867.py:88] {

"project_name":"Project012AlexNetZodiac",

"dataset_name":"Zodiac",

"architecture":"Alexnet",

"training_data":"train",

"starting_time":"202210092235",

"input_size":[

3,

227,

227

],

"mean_value":[

0.485,

0.456,

0.406

],

"std_value":[

0.229,

0.224,

0.225

],

"num_trainval":7840,

"num_train":7190,

"num_val":650,

"num_test":660,

"class_dim":12,

"label_dict":{

"0":"dog",

"1":"dragon",

"2":"goat",

"3":"horse",

"4":"monkey",

"5":"ox",

"6":"pig",

"7":"rabbit",

"8":"ratt",

"9":"rooster",

"10":"snake",

"11":"tiger"

},

"total_epoch":10,

"batch_size":64,

"log_interval":10,

"eval_interval":1,

"dataset_root_path":"D:\\Workspace\\ExpDatasets\\",

"result_root_path":"D:\\Workspace\\ExpResults\\",

"deployment_root_path":"D:\\Workspace\\ExpDeployments\\",

"useGPU":true,

"learning_strategy":{

"optimizer_strategy":"Momentum",

"learning_rate_strategy":"CosineAnnealingDecay",

"learning_rate":0.001,

"momentum":0.9,

"Piecewise_boundaries":[

60,

80,

90

],

"Piecewise_values":[

0.01,

0.001,

0.0001,

1e-05

],

"Exponential_gamma":0.9,

"Polynomial_decay_steps":10,

"verbose":true

},

"augmentation_strategy":{

"withAugmentation":true,

"augmentation_prob":0.5,

"rotate_angle":15,

"Hflip_prob":0.5,

"brightness":0.4,

"contrast":0.4,

"saturation":0.4,

"hue":0.4

}

}

INFO 2022-10-09 22:35:55,051 1244777867.py:90] 训练参数保存完毕,使用Alexnet模型, 训练Zodiac数据, 训练集train, 启动训练...

INFO 2022-10-09 22:35:55,052 1244777867.py:91] 当前模型目录为:Zodiac_Alexnet_202210092235

INFO 2022-10-09 22:35:55,057 1244777867.py:103] 模型参数信息:

INFO 2022-10-09 22:35:55,062 1244777867.py:104] {'total_params': 58330508, 'trainable_params': 58330508}

    ---------------------------------------------------------------------------
     Layer (type) Input Shape             Output Shape               Param #
    ===========================================================================
     Conv2D-11    [[1, 3, 227, 227]]      [1, 96, 55, 55]             34,944
     ReLU-15      [[1, 96, 55, 55]]       [1, 96, 55, 55]                  0
     MaxPool2D-7  [[1, 96, 55, 55]]       [1, 96, 27, 27]                  0
     Conv2D-12    [[1, 96, 27, 27]]       [1, 256, 27, 27]           614,656
     ReLU-16      [[1, 256, 27, 27]]      [1, 256, 27, 27]                 0
     MaxPool2D-8  [[1, 256, 27, 27]]      [1, 256, 13, 13]                 0
     Conv2D-13    [[1, 256, 13, 13]]      [1, 384, 13, 13]           885,120
     ReLU-17      [[1, 384, 13, 13]]      [1, 384, 13, 13]                 0
     Conv2D-14    [[1, 384, 13, 13]]      [1, 384, 13, 13]         1,327,488
     ReLU-18      [[1, 384, 13, 13]]      [1, 384, 13, 13]                 0
     Conv2D-15    [[1, 384, 13, 13]]      [1, 256, 13, 13]           884,992
     ReLU-19      [[1, 256, 13, 13]]      [1, 256, 13, 13]                 0
     MaxPool2D-9  [[1, 256, 13, 13]]      [1, 256, 6, 6]                   0
     Linear-8     [[1, 9216]]             [1, 4096]               37,752,832
     ReLU-20      [[1, 4096]]             [1, 4096]                        0
     Dropout-5    [[1, 4096]]             [1, 4096]                        0
     Linear-9     [[1, 4096]]             [1, 4096]               16,781,312
     ReLU-21      [[1, 4096]]             [1, 4096]                        0
     Dropout-6    [[1, 4096]]             [1, 4096]                        0
     Linear-10    [[1, 4096]]             [1, 12]                     49,164
    ===========================================================================
    Total params: 58,330,508
    Trainable params: 58,330,508
    Non-trainable params: 0
    ---------------------------------------------------------------------------
    Input size (MB): 0.59
    Forward/backward pass size (MB): 11.04
    Params size (MB): 222.51
    Estimated Total Size (MB): 234.14
    ---------------------------------------------------------------------------
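The parameter counts in the summary can be re-derived by hand, which is a useful sanity check when designing the network class yourself. The sketch below is not part of the original notebook: the channel and feature sizes are read off the summary, while the kernel sizes (11, 5, 3, 3, 3) are the standard AlexNet values and are assumed here. The helper names `conv_params` and `linear_params` are illustrative.

```python
# Recompute the per-layer parameter counts listed in the model summary.
# Assumption: kernel sizes follow standard AlexNet (11, 5, 3, 3, 3).

def conv_params(in_ch, out_ch, k):
    """k*k*in_ch*out_ch weights plus one bias per output channel."""
    return k * k * in_ch * out_ch + out_ch

def linear_params(in_f, out_f):
    """in_f*out_f weights plus one bias per output feature."""
    return in_f * out_f + out_f

layers = {
    "Conv2D-11": conv_params(3, 96, 11),
    "Conv2D-12": conv_params(96, 256, 5),
    "Conv2D-13": conv_params(256, 384, 3),
    "Conv2D-14": conv_params(384, 384, 3),
    "Conv2D-15": conv_params(384, 256, 3),
    "Linear-8": linear_params(256 * 6 * 6, 4096),   # 9216 flattened features
    "Linear-9": linear_params(4096, 4096),
    "Linear-10": linear_params(4096, 12),           # 12 zodiac classes
}

total = sum(layers.values())
size_mb = total * 4 / 1024 ** 2   # float32 = 4 bytes per parameter

for name, count in layers.items():
    print(f"{name:<10} {count:>12,}")
print(f"total: {total:,} parameters, {size_mb:.2f} MB")
```

Under these assumptions every layer reproduces the count in the summary, and the two first fully connected layers account for most of the 58,330,508 parameters.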

Current learning-rate strategy: Momentum + CosineAnnealingDecay

Epoch 0: CosineAnnealingDecay set learning rate to 0.001.

c:\Users\Administrator\anaconda3\lib\site-packages\paddle\fluid\dygraph\amp\auto_cast.py:301: UserWarning: For float16, amp only support NVIDIA GPU with Compute Capability 7.0 or higher, current GPU is: GeForce GTX 1080 Ti, with Compute Capability: 6.1.

warnings.warn(

INFO 2022-10-09 22:36:04,837 1244777867.py:42] Epoch:1/10, batch:10, train_loss:[2.94980], acc_top1:[0.12500], acc_top5:[0.46875](9.77s)

INFO 2022-10-09 22:36:14,978 1244777867.py:42] Epoch:1/10, batch:20, train_loss:[2.58542], acc_top1:[0.10938], acc_top5:[0.46875](10.14s)

INFO 2022-10-09 22:36:23,816 1244777867.py:42] Epoch:1/10, batch:30, train_loss:[2.53845], acc_top1:[0.09375], acc_top5:[0.39062](8.84s)

INFO 2022-10-09 22:36:32,607 1244777867.py:42] Epoch:1/10, batch:40, train_loss:[2.50344], acc_top1:[0.09375], acc_top5:[0.40625](8.79s)

INFO 2022-10-09 22:36:42,721 1244777867.py:42] Epoch:1/10, batch:50, train_loss:[2.50078], acc_top1:[0.10938], acc_top5:[0.40625](10.11s)

INFO 2022-10-09 22:36:53,049 1244777867.py:42] Epoch:1/10, batch:60, train_loss:[2.42050], acc_top1:[0.17188], acc_top5:[0.53125](10.33s)

INFO 2022-10-09 22:37:01,756 1244777867.py:42] Epoch:1/10, batch:70, train_loss:[2.43635], acc_top1:[0.10938], acc_top5:[0.53125](8.71s)

INFO 2022-10-09 22:37:10,710 1244777867.py:42] Epoch:1/10, batch:80, train_loss:[2.47609], acc_top1:[0.07812], acc_top5:[0.53125](8.95s)

INFO 2022-10-09 22:37:20,661 1244777867.py:42] Epoch:1/10, batch:90, train_loss:[2.40079], acc_top1:[0.15625], acc_top5:[0.56250](9.95s)

INFO 2022-10-09 22:37:29,478 1244777867.py:42] Epoch:1/10, batch:100, train_loss:[2.47170], acc_top1:[0.07812], acc_top5:[0.53125](8.82s)

INFO 2022-10-09 22:37:38,846 1244777867.py:42] Epoch:1/10, batch:110, train_loss:[2.40918], acc_top1:[0.17188], acc_top5:[0.57812](9.37s)

INFO 2022-10-09 22:37:50,665 1244777867.py:55] [validation] Epoch:1/10, val_loss:[0.03938], val_top1:[0.24154], val_top5:[0.63692]

INFO 2022-10-09 22:38:03,840 1244777867.py:73] Saved the current model (epoch=1) as the best model: Zodiac_Alexnet_final

INFO 2022-10-09 22:38:03,840 1244777867.py:74] Best top-1 test accuracy: 0.24154 (epoch=1)

INFO 2022-10-09 22:38:10,610 1244777867.py:42] Epoch:2/10, batch:120, train_loss:[2.42001], acc_top1:[0.14062], acc_top5:[0.42188](31.76s)

INFO 2022-10-09 22:38:19,031 1244777867.py:42] Epoch:2/10, batch:130, train_loss:[2.34658], acc_top1:[0.21875], acc_top5:[0.59375](8.42s)

INFO 2022-10-09 22:38:30,389 1244777867.py:42] Epoch:2/10, batch:140, train_loss:[2.36375], acc_top1:[0.15625], acc_top5:[0.57812](11.36s)

INFO 2022-10-09 22:38:40,551 1244777867.py:42] Epoch:2/10, batch:150, train_loss:[2.38448], acc_top1:[0.17188], acc_top5:[0.50000](10.16s)

INFO 2022-10-09 22:38:49,057 1244777867.py:42] Epoch:2/10, batch:160, train_loss:[2.32564], acc_top1:[0.17188], acc_top5:[0.60938](8.51s)

INFO 2022-10-09 22:38:59,495 1244777867.py:42] Epoch:2/10, batch:170, train_loss:[2.25798], acc_top1:[0.26562], acc_top5:[0.71875](10.44s)

INFO 2022-10-09 22:39:08,554 1244777867.py:42] Epoch:2/10, batch:180, train_loss:[2.25164], acc_top1:[0.20312], acc_top5:[0.71875](9.06s)

INFO 2022-10-09 22:39:17,310 1244777867.py:42] Epoch:2/10, batch:190, train_loss:[2.24757], acc_top1:[0.23438], acc_top5:[0.75000](8.76s)

INFO 2022-10-09 22:39:28,379 1244777867.py:42] Epoch:2/10, batch:200, train_loss:[2.16094], acc_top1:[0.31250], acc_top5:[0.65625](11.07s)

INFO 2022-10-09 22:39:37,240 1244777867.py:42] Epoch:2/10, batch:210, train_loss:[2.26197], acc_top1:[0.21875], acc_top5:[0.62500](8.86s)

INFO 2022-10-09 22:39:45,960 1244777867.py:42] Epoch:2/10, batch:220, train_loss:[2.10725], acc_top1:[0.34375], acc_top5:[0.78125](8.72s)

INFO 2022-10-09 22:39:59,213 1244777867.py:55] [validation] Epoch:2/10, val_loss:[0.03436], val_top1:[0.30615], val_top5:[0.72923]

INFO 2022-10-09 22:40:12,486 1244777867.py:73] Saved the current model (epoch=2) as the best model: Zodiac_Alexnet_final

INFO 2022-10-09 22:40:12,487 1244777867.py:74] Best top-1 test accuracy: 0.30615 (epoch=2)

INFO 2022-10-09 22:40:18,215 1244777867.py:42] Epoch:3/10, batch:230, train_loss:[2.16434], acc_top1:[0.20312], acc_top5:[0.73438](32.26s)

INFO 2022-10-09 22:40:27,138 1244777867.py:42] Epoch:3/10, batch:240, train_loss:[2.09661], acc_top1:[0.29688], acc_top5:[0.76562](8.92s)

INFO 2022-10-09 22:40:36,326 1244777867.py:42] Epoch:3/10, batch:250, train_loss:[2.08034], acc_top1:[0.34375], acc_top5:[0.78125](9.19s)

INFO 2022-10-09 22:40:46,530 1244777867.py:42] Epoch:3/10, batch:260, train_loss:[2.12270], acc_top1:[0.32812], acc_top5:[0.75000](10.20s)

INFO 2022-10-09 22:40:55,791 1244777867.py:42] Epoch:3/10, batch:270, train_loss:[2.11551], acc_top1:[0.26562], acc_top5:[0.67188](9.26s)

INFO 2022-10-09 22:41:04,988 1244777867.py:42] Epoch:3/10, batch:280, train_loss:[2.06909], acc_top1:[0.28125], acc_top5:[0.68750](9.20s)

INFO 2022-10-09 22:41:13,949 1244777867.py:42] Epoch:3/10, batch:290, train_loss:[2.15314], acc_top1:[0.31250], acc_top5:[0.68750](8.96s)

INFO 2022-10-09 22:41:23,156 1244777867.py:42] Epoch:3/10, batch:300, train_loss:[1.98960], acc_top1:[0.35938], acc_top5:[0.76562](9.21s)

INFO 2022-10-09 22:41:31,576 1244777867.py:42] Epoch:3/10, batch:310, train_loss:[2.04187], acc_top1:[0.37500], acc_top5:[0.82812](8.42s)

INFO 2022-10-09 22:41:41,516 1244777867.py:42] Epoch:3/10, batch:320, train_loss:[2.05257], acc_top1:[0.29688], acc_top5:[0.79688](9.94s)

INFO 2022-10-09 22:41:52,339 1244777867.py:42] Epoch:3/10, batch:330, train_loss:[2.14113], acc_top1:[0.21875], acc_top5:[0.75000](10.82s)

INFO 2022-10-09 22:42:08,748 1244777867.py:55] [validation] Epoch:3/10, val_loss:[0.03178], val_top1:[0.35692], val_top5:[0.77231]

INFO 2022-10-09 22:42:20,110 1244777867.py:73] Saved the current model (epoch=3) as the best model: Zodiac_Alexnet_final

INFO 2022-10-09 22:42:20,111 1244777867.py:74] Best top-1 test accuracy: 0.35692 (epoch=3)

INFO 2022-10-09 22:42:23,343 1244777867.py:42] Epoch:4/10, batch:340, train_loss:[2.31590], acc_top1:[0.18750], acc_top5:[0.60938](31.00s)

INFO 2022-10-09 22:42:32,961 1244777867.py:42] Epoch:4/10, batch:350, train_loss:[1.92807], acc_top1:[0.32812], acc_top5:[0.76562](9.62s)

INFO 2022-10-09 22:42:44,485 1244777867.py:42] Epoch:4/10, batch:360, train_loss:[1.96248], acc_top1:[0.31250], acc_top5:[0.81250](11.52s)

INFO 2022-10-09 22:42:53,462 1244777867.py:42] Epoch:4/10, batch:370, train_loss:[1.97263], acc_top1:[0.29688], acc_top5:[0.79688](8.98s)

INFO 2022-10-09 22:43:01,435 1244777867.py:42] Epoch:4/10, batch:380, train_loss:[2.12414], acc_top1:[0.31250], acc_top5:[0.71875](7.97s)

INFO 2022-10-09 22:43:10,319 1244777867.py:42] Epoch:4/10, batch:390, train_loss:[2.25874], acc_top1:[0.18750], acc_top5:[0.67188](8.88s)

INFO 2022-10-09 22:43:21,802 1244777867.py:42] Epoch:4/10, batch:400, train_loss:[2.02269], acc_top1:[0.26562], acc_top5:[0.81250](11.48s)

INFO 2022-10-09 22:43:30,616 1244777867.py:42] Epoch:4/10, batch:410, train_loss:[2.09899], acc_top1:[0.29688], acc_top5:[0.75000](8.81s)

INFO 2022-10-09 22:43:39,345 1244777867.py:42] Epoch:4/10, batch:420, train_loss:[2.22843], acc_top1:[0.23438], acc_top5:[0.68750](8.73s)

INFO 2022-10-09 22:43:48,011 1244777867.py:42] Epoch:4/10, batch:430, train_loss:[2.02532], acc_top1:[0.25000], acc_top5:[0.71875](8.67s)

INFO 2022-10-09 22:43:58,850 1244777867.py:42] Epoch:4/10, batch:440, train_loss:[1.89112], acc_top1:[0.40625], acc_top5:[0.75000](10.84s)

INFO 2022-10-09 22:44:16,017 1244777867.py:55] [validation] Epoch:4/10, val_loss:[0.02914], val_top1:[0.40000], val_top5:[0.82000]

INFO 2022-10-09 22:44:28,494 1244777867.py:73] Saved the current model (epoch=4) as the best model: Zodiac_Alexnet_final

INFO 2022-10-09 22:44:28,495 1244777867.py:74] Best top-1 test accuracy: 0.40000 (epoch=4)

INFO 2022-10-09 22:44:30,105 1244777867.py:42] Epoch:5/10, batch:450, train_loss:[1.97591], acc_top1:[0.31250], acc_top5:[0.73438](31.25s)

INFO 2022-10-09 22:44:39,415 1244777867.py:42] Epoch:5/10, batch:460, train_loss:[1.76088], acc_top1:[0.39062], acc_top5:[0.79688](9.31s)

INFO 2022-10-09 22:44:49,862 1244777867.py:42] Epoch:5/10, batch:470, train_loss:[1.96394], acc_top1:[0.31250], acc_top5:[0.81250](10.45s)

INFO 2022-10-09 22:44:58,866 1244777867.py:42] Epoch:5/10, batch:480, train_loss:[1.89846], acc_top1:[0.40625], acc_top5:[0.76562](9.00s)

INFO 2022-10-09 22:45:09,225 1244777867.py:42] Epoch:5/10, batch:490, train_loss:[1.78617], acc_top1:[0.40625], acc_top5:[0.90625](10.36s)

INFO 2022-10-09 22:45:17,611 1244777867.py:42] Epoch:5/10, batch:500, train_loss:[1.90489], acc_top1:[0.37500], acc_top5:[0.85938](8.39s)

INFO 2022-10-09 22:45:26,203 1244777867.py:42] Epoch:5/10, batch:510, train_loss:[1.77656], acc_top1:[0.43750], acc_top5:[0.81250](8.59s)

INFO 2022-10-09 22:45:36,398 1244777867.py:42] Epoch:5/10, batch:520, train_loss:[1.96280], acc_top1:[0.37500], acc_top5:[0.81250](10.19s)

INFO 2022-10-09 22:45:45,136 1244777867.py:42] Epoch:5/10, batch:530, train_loss:[1.95133], acc_top1:[0.37500], acc_top5:[0.75000](8.74s)

INFO 2022-10-09 22:45:53,911 1244777867.py:42] Epoch:5/10, batch:540, train_loss:[1.88728], acc_top1:[0.39062], acc_top5:[0.75000](8.77s)

INFO 2022-10-09 22:46:04,221 1244777867.py:42] Epoch:5/10, batch:550, train_loss:[1.85370], acc_top1:[0.35938], acc_top5:[0.78125](10.31s)

INFO 2022-10-09 22:46:14,477 1244777867.py:42] Epoch:5/10, batch:560, train_loss:[2.10761], acc_top1:[0.28125], acc_top5:[0.71875](10.26s)

INFO 2022-10-09 22:46:24,581 1244777867.py:55] [validation] Epoch:5/10, val_loss:[0.02926], val_top1:[0.38154], val_top5:[0.82000]

INFO 2022-10-09 22:46:24,581 1244777867.py:74] Best top-1 test accuracy: 0.40000 (epoch=4)

INFO 2022-10-09 22:46:33,931 1244777867.py:42] Epoch:6/10, batch:570, train_loss:[1.75981], acc_top1:[0.37500], acc_top5:[0.85938](19.45s)

INFO 2022-10-09 22:46:43,842 1244777867.py:42] Epoch:6/10, batch:580, train_loss:[1.78879], acc_top1:[0.35938], acc_top5:[0.81250](9.91s)

INFO 2022-10-09 22:46:52,488 1244777867.py:42] Epoch:6/10, batch:590, train_loss:[1.70519], acc_top1:[0.45312], acc_top5:[0.87500](8.65s)

INFO 2022-10-09 22:47:01,522 1244777867.py:42] Epoch:6/10, batch:600, train_loss:[1.94305], acc_top1:[0.29688], acc_top5:[0.82812](9.03s)

INFO 2022-10-09 22:47:12,419 1244777867.py:42] Epoch:6/10, batch:610, train_loss:[1.84541], acc_top1:[0.37500], acc_top5:[0.81250](10.90s)

INFO 2022-10-09 22:47:23,651 1244777867.py:42] Epoch:6/10, batch:620, train_loss:[2.07612], acc_top1:[0.31250], acc_top5:[0.71875](11.23s)

INFO 2022-10-09 22:47:33,649 1244777867.py:42] Epoch:6/10, batch:630, train_loss:[1.82666], acc_top1:[0.35938], acc_top5:[0.84375](10.00s)

INFO 2022-10-09 22:47:42,078 1244777867.py:42] Epoch:6/10, batch:640, train_loss:[1.66201], acc_top1:[0.42188], acc_top5:[0.85938](8.43s)

INFO 2022-10-09 22:47:51,509 1244777867.py:42] Epoch:6/10, batch:650, train_loss:[1.76930], acc_top1:[0.37500], acc_top5:[0.85938](9.43s)

INFO 2022-10-09 22:48:00,491 1244777867.py:42] Epoch:6/10, batch:660, train_loss:[1.73668], acc_top1:[0.32812], acc_top5:[0.82812](8.98s)

INFO 2022-10-09 22:48:09,203 1244777867.py:42] Epoch:6/10, batch:670, train_loss:[1.72636], acc_top1:[0.45312], acc_top5:[0.90625](8.71s)

INFO 2022-10-09 22:48:20,550 1244777867.py:55] [validation] Epoch:6/10, val_loss:[0.02784], val_top1:[0.39846], val_top5:[0.84769]

INFO 2022-10-09 22:48:20,550 1244777867.py:74] Best top-1 test accuracy: 0.40000 (epoch=4)

INFO 2022-10-09 22:48:27,431 1244777867.py:42] Epoch:7/10, batch:680, train_loss:[1.57671], acc_top1:[0.42188], acc_top5:[0.90625](18.23s)

INFO 2022-10-09 22:48:36,213 1244777867.py:42] Epoch:7/10, batch:690, train_loss:[1.50755], acc_top1:[0.48438], acc_top5:[0.87500](8.78s)

INFO 2022-10-09 22:48:45,403 1244777867.py:42] Epoch:7/10, batch:700, train_loss:[1.64809], acc_top1:[0.42188], acc_top5:[0.85938](9.19s)

INFO 2022-10-09 22:48:56,197 1244777867.py:42] Epoch:7/10, batch:710, train_loss:[1.76861], acc_top1:[0.39062], acc_top5:[0.84375](10.79s)

INFO 2022-10-09 22:49:06,478 1244777867.py:42] Epoch:7/10, batch:720, train_loss:[1.60422], acc_top1:[0.43750], acc_top5:[0.90625](10.28s)

INFO 2022-10-09 22:49:15,749 1244777867.py:42] Epoch:7/10, batch:730, train_loss:[1.56605], acc_top1:[0.43750], acc_top5:[0.87500](9.27s)

INFO 2022-10-09 22:49:25,592 1244777867.py:42] Epoch:7/10, batch:740, train_loss:[1.80573], acc_top1:[0.40625], acc_top5:[0.85938](9.84s)

INFO 2022-10-09 22:49:35,009 1244777867.py:42] Epoch:7/10, batch:750, train_loss:[1.65568], acc_top1:[0.43750], acc_top5:[0.82812](9.42s)

INFO 2022-10-09 22:49:46,005 1244777867.py:42] Epoch:7/10, batch:760, train_loss:[1.75281], acc_top1:[0.48438], acc_top5:[0.87500](11.00s)

INFO 2022-10-09 22:49:55,049 1244777867.py:42] Epoch:7/10, batch:770, train_loss:[1.76410], acc_top1:[0.50000], acc_top5:[0.87500](9.04s)

INFO 2022-10-09 22:50:03,399 1244777867.py:42] Epoch:7/10, batch:780, train_loss:[1.74479], acc_top1:[0.40625], acc_top5:[0.79688](8.35s)

INFO 2022-10-09 22:50:16,565 1244777867.py:55] [validation] Epoch:7/10, val_loss:[0.02628], val_top1:[0.41231], val_top5:[0.85538]

INFO 2022-10-09 22:50:28,623 1244777867.py:73] Saved the current model (epoch=7) as the best model: Zodiac_Alexnet_final

INFO 2022-10-09 22:50:28,623 1244777867.py:74] Best top-1 test accuracy: 0.41231 (epoch=7)

INFO 2022-10-09 22:50:34,013 1244777867.py:42] Epoch:8/10, batch:790, train_loss:[1.38737], acc_top1:[0.54688], acc_top5:[0.95312](30.61s)

INFO 2022-10-09 22:50:43,163 1244777867.py:42] Epoch:8/10, batch:800, train_loss:[1.83281], acc_top1:[0.50000], acc_top5:[0.84375](9.15s)

INFO 2022-10-09 22:50:53,771 1244777867.py:42] Epoch:8/10, batch:810, train_loss:[1.61041], acc_top1:[0.45312], acc_top5:[0.89062](10.61s)

INFO 2022-10-09 22:51:03,034 1244777867.py:42] Epoch:8/10, batch:820, train_loss:[1.81873], acc_top1:[0.39062], acc_top5:[0.89062](9.26s)

INFO 2022-10-09 22:51:12,354 1244777867.py:42] Epoch:8/10, batch:830, train_loss:[1.76452], acc_top1:[0.39062], acc_top5:[0.81250](9.32s)

INFO 2022-10-09 22:51:21,561 1244777867.py:42] Epoch:8/10, batch:840, train_loss:[1.62825], acc_top1:[0.42188], acc_top5:[0.90625](9.21s)

INFO 2022-10-09 22:51:29,305 1244777867.py:42] Epoch:8/10, batch:850, train_loss:[1.74147], acc_top1:[0.42188], acc_top5:[0.84375](7.74s)

INFO 2022-10-09 22:51:39,645 1244777867.py:42] Epoch:8/10, batch:860, train_loss:[1.57180], acc_top1:[0.51562], acc_top5:[0.89062](10.34s)

INFO 2022-10-09 22:51:50,331 1244777867.py:42] Epoch:8/10, batch:870, train_loss:[1.75662], acc_top1:[0.40625], acc_top5:[0.79688](10.69s)

INFO 2022-10-09 22:52:00,194 1244777867.py:42] Epoch:8/10, batch:880, train_loss:[1.80102], acc_top1:[0.50000], acc_top5:[0.79688](9.86s)

INFO 2022-10-09 22:52:08,209 1244777867.py:42] Epoch:8/10, batch:890, train_loss:[1.67884], acc_top1:[0.40625], acc_top5:[0.84375](8.02s)

INFO 2022-10-09 22:52:24,216 1244777867.py:55] [validation] Epoch:8/10, val_loss:[0.02629], val_top1:[0.43538], val_top5:[0.86769]

INFO 2022-10-09 22:52:35,639 1244777867.py:73] Saved the current model (epoch=8) as the best model: Zodiac_Alexnet_final

INFO 2022-10-09 22:52:35,642 1244777867.py:74] Best top-1 test accuracy: 0.43538 (epoch=8)

INFO 2022-10-09 22:52:38,571 1244777867.py:42] Epoch:9/10, batch:900, train_loss:[1.26910], acc_top1:[0.64062], acc_top5:[0.90625](30.36s)

INFO 2022-10-09 22:52:48,657 1244777867.py:42] Epoch:9/10, batch:910, train_loss:[1.36239], acc_top1:[0.51562], acc_top5:[0.89062](10.09s)

INFO 2022-10-09 22:52:58,162 1244777867.py:42] Epoch:9/10, batch:920, train_loss:[1.75519], acc_top1:[0.48438], acc_top5:[0.82812](9.51s)

INFO 2022-10-09 22:53:07,209 1244777867.py:42] Epoch:9/10, batch:930, train_loss:[1.55206], acc_top1:[0.51562], acc_top5:[0.96875](9.05s)

INFO 2022-10-09 22:53:16,978 1244777867.py:42] Epoch:9/10, batch:940, train_loss:[1.62840], acc_top1:[0.42188], acc_top5:[0.87500](9.77s)

INFO 2022-10-09 22:53:26,735 1244777867.py:42] Epoch:9/10, batch:950, train_loss:[1.51030], acc_top1:[0.50000], acc_top5:[0.87500](9.76s)

INFO 2022-10-09 22:53:36,479 1244777867.py:42] Epoch:9/10, batch:960, train_loss:[1.58515], acc_top1:[0.50000], acc_top5:[0.89062](9.74s)

INFO 2022-10-09 22:53:46,311 1244777867.py:42] Epoch:9/10, batch:970, train_loss:[1.62021], acc_top1:[0.37500], acc_top5:[0.89062](9.83s)

INFO 2022-10-09 22:53:55,453 1244777867.py:42] Epoch:9/10, batch:980, train_loss:[1.51231], acc_top1:[0.46875], acc_top5:[0.85938](9.14s)

INFO 2022-10-09 22:54:04,390 1244777867.py:42] Epoch:9/10, batch:990, train_loss:[1.87921], acc_top1:[0.45312], acc_top5:[0.85938](8.94s)

INFO 2022-10-09 22:54:12,997 1244777867.py:42] Epoch:9/10, batch:1000, train_loss:[1.38564], acc_top1:[0.59375], acc_top5:[0.92188](8.61s)

INFO 2022-10-09 22:54:30,927 1244777867.py:55] [validation] Epoch:9/10, val_loss:[0.02509], val_top1:[0.45077], val_top5:[0.87231]

INFO 2022-10-09 22:54:42,720 1244777867.py:73] Saved the current model (epoch=9) as the best model: Zodiac_Alexnet_final

INFO 2022-10-09 22:54:42,721 1244777867.py:74] Best top-1 test accuracy: 0.45077 (epoch=9)

INFO 2022-10-09 22:54:45,063 1244777867.py:42] Epoch:10/10, batch:1010, train_loss:[1.32912], acc_top1:[0.54688], acc_top5:[0.96875](32.07s)

INFO 2022-10-09 22:54:54,247 1244777867.py:42] Epoch:10/10, batch:1020, train_loss:[1.76405], acc_top1:[0.31250], acc_top5:[0.85938](9.18s)

INFO 2022-10-09 22:55:03,631 1244777867.py:42] Epoch:10/10, batch:1030, train_loss:[1.43765], acc_top1:[0.53125], acc_top5:[0.89062](9.38s)

INFO 2022-10-09 22:55:12,580 1244777867.py:42] Epoch:10/10, batch:1040, train_loss:[1.38902], acc_top1:[0.56250], acc_top5:[0.92188](8.95s)

INFO 2022-10-09 22:55:22,857 1244777867.py:42] Epoch:10/10, batch:1050, train_loss:[1.32393], acc_top1:[0.56250], acc_top5:[0.90625](10.28s)

INFO 2022-10-09 22:55:31,355 1244777867.py:42] Epoch:10/10, batch:1060, train_loss:[1.55648], acc_top1:[0.46875], acc_top5:[0.82812](8.50s)

INFO 2022-10-09 22:55:42,346 1244777867.py:42] Epoch:10/10, batch:1070, train_loss:[1.82418], acc_top1:[0.39062], acc_top5:[0.81250](10.99s)

INFO 2022-10-09 22:55:51,818 1244777867.py:42] Epoch:10/10, batch:1080, train_loss:[1.50884], acc_top1:[0.48438], acc_top5:[0.90625](9.47s)

INFO 2022-10-09 22:56:01,285 1244777867.py:42] Epoch:10/10, batch:1090, train_loss:[1.56120], acc_top1:[0.48438], acc_top5:[0.85938](9.47s)

INFO 2022-10-09 22:56:10,273 1244777867.py:42] Epoch:10/10, batch:1100, train_loss:[1.45238], acc_top1:[0.51562], acc_top5:[0.92188](8.99s)

INFO 2022-10-09 22:56:18,871 1244777867.py:42] Epoch:10/10, batch:1110, train_loss:[1.64210], acc_top1:[0.46875], acc_top5:[0.81250](8.60s)

INFO 2022-10-09 22:56:27,872 1244777867.py:42] Epoch:10/10, batch:1120, train_loss:[1.65337], acc_top1:[0.48438], acc_top5:[0.85938](9.00s)

INFO 2022-10-09 22:56:38,178 1244777867.py:55] [validation] Epoch:10/10, val_loss:[0.02446], val_top1:[0.45692], val_top5:[0.87538]

INFO 2022-10-09 22:56:55,944 1244777867.py:73] Saved the current model (epoch=10) as the best model: Zodiac_Alexnet_final

INFO 2022-10-09 22:56:55,945 1244777867.py:74] Best top-1 test accuracy: 0.45692 (epoch=10)

INFO 2022-10-09 22:56:55,945 1244777867.py:77] Training complete; final accuracy=0.45692 (epoch=10), total time 1260.88s, saved as: Zodiac_Alexnet_final

INFO 2022-10-09 22:56:55,946 1244777867.py:117] Training finished; results path: D:\Workspace\ExpResults\Project012AlexNetZodiac.

INFO 2022-10-09 22:56:55,946 1244777867.py:120] Done.
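In the log above, a new best model is saved only after epochs 1, 2, 3, 4, 7, 8, 9 and 10; epochs 5 and 6 did not beat the validation top-1 accuracy of epoch 4. The following is a minimal sketch of that selection rule, assuming a strict "greater than" comparison. The function `track_best` is illustrative and is not the notebook's training code; the accuracy values are copied from the validation lines of the log.

```python
# Validation top-1 accuracy per epoch, copied from the training log.
val_top1 = [0.24154, 0.30615, 0.35692, 0.40000, 0.38154,
            0.39846, 0.41231, 0.43538, 0.45077, 0.45692]

def track_best(history):
    """Return (best accuracy, best epoch, epochs at which a save would occur)."""
    best_acc, best_epoch, saved_at = 0.0, 0, []
    for epoch, acc in enumerate(history, start=1):
        if acc > best_acc:            # assumption: strict improvement triggers a save
            best_acc, best_epoch = acc, epoch
            saved_at.append(epoch)    # a real loop would write the checkpoint here
    return best_acc, best_epoch, saved_at

best_acc, best_epoch, saved_at = track_best(val_top1)
print(f"best top-1 = {best_acc:.5f} at epoch {best_epoch}; saved at epochs {saved_at}")
```

Because the checkpoint is overwritten on every improvement, the file named `Zodiac_Alexnet_final` always holds the best-so-far weights rather than the weights of the last epoch.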

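After training finishes, it is recommended to copy the final files from the ExpResults folder to ExpDeployments so they can be used for deployment and application.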
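The copy step can also be scripted. The sketch below is only an illustration using the standard library: the helper `copy_for_deployment`, the folder layout and the placeholder file are assumptions, and the demonstration runs in a temporary directory rather than in `D:\Workspace`.

```python
import os
import shutil
import tempfile

def copy_for_deployment(result_dir, deployment_dir):
    """Copy a result folder to the deployment folder, replacing any previous copy."""
    if os.path.isdir(deployment_dir):
        shutil.rmtree(deployment_dir)           # drop the stale deployment first
    shutil.copytree(result_dir, deployment_dir)  # creates missing parent folders
    return sorted(os.listdir(deployment_dir))

# Demonstration with a hypothetical layout inside a temporary directory.
root = tempfile.mkdtemp()
result_dir = os.path.join(root, 'ExpResults', 'Project012AlexNetZodiac')
deployment_dir = os.path.join(root, 'ExpDeployments', 'Project012AlexNetZodiac')

os.makedirs(os.path.join(result_dir, 'final_models'))
with open(os.path.join(result_dir, 'final_models', 'placeholder.txt'), 'w') as f:
    f.write('stand-in for the exported model files')

copied = copy_for_deployment(result_dir, deployment_dir)
print(copied)
```

For real use, `result_dir` and `deployment_dir` would be built from `result_root_path`, `deployment_root_path` and the project name in the configuration.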

3.4 Offline Testing

    if __name__ == '__main__':
        # Set the shapes of the input samples
        input_spec = InputSpec(shape=[None] + args['input_size'], dtype='float32', name='image')
        label_spec = InputSpec(shape=[None, 1], dtype='int64', name='label')

        # Load the model
        network = Alexnet(num_classes=12)
        model = paddle.Model(network, input_spec, label_spec)        # instantiate the model
        model.load(deployment_checkpoint_path)                       # load the tuned model parameters
        model.prepare(loss=paddle.nn.CrossEntropyLoss(),             # set the loss
                      metrics=paddle.metric.Accuracy(topk=(1,5)))    # set the evaluation metrics

        # Run the evaluation function and print the loss and accuracy on the validation and test sets
        print('Starting evaluation...')
        avg_loss, avg_acc_top1, avg_acc_top5 = eval(model, val_reader(), verbose=1)
        print('\r [Validation set] loss: {:.5f}, top-1 accuracy: {:.5f}, top-5 accuracy: {:.5f} \n'.format(avg_loss, avg_acc_top1, avg_acc_top5), end='')
        avg_loss, avg_acc_top1, avg_acc_top5 = eval(model, test_reader(), verbose=1)
        print('\r [Test set] loss: {:.5f}, top-1 accuracy: {:.5f}, top-5 accuracy: {:.5f}'.format(avg_loss, avg_acc_top1, avg_acc_top5), end='')

Starting evaluation...

[Validation set] loss: 0.03308, top-1 accuracy: 0.32923, top-5 accuracy: 0.77231

[Test set] loss: 0.03435, top-1 accuracy: 0.34394, top-5 accuracy: 0.75909

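【Result Analysis】

Note that the accuracy reported here differs from the accuracy logged during training: the training log evaluates on the validation set only, whereas this offline test also evaluates on the test set. The validation accuracy above (0.32923) is also lower than the best validation accuracy in the training log (0.45692), which suggests that the checkpoint at deployment_checkpoint_path may not come from that training run.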
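For reference, the top-1 and top-5 figures count a sample as correct when its true label is among the 1 or 5 highest-scoring classes. The following NumPy sketch shows the idea on a toy example; it is an illustration, not the code inside `paddle.metric.Accuracy`, and the function `topk_accuracy` and the toy scores are made up for this purpose.

```python
import numpy as np

def topk_accuracy(scores, labels, k):
    """Fraction of samples whose true label is among the k highest scores."""
    topk = np.argsort(scores, axis=1)[:, -k:]          # indices of the k largest scores per row
    hits = (topk == labels[:, None]).any(axis=1)       # True where the label appears in the top k
    return float(hits.mean())

# Toy example: 4 samples, 3 classes.
scores = np.array([[0.10, 0.70, 0.20],
                   [0.50, 0.30, 0.20],
                   [0.20, 0.30, 0.50],
                   [0.40, 0.35, 0.25]])
labels = np.array([1, 1, 2, 2])

top1 = topk_accuracy(scores, labels, k=1)
top2 = topk_accuracy(scores, labels, k=2)
print(top1, top2)
```

With 12 classes, top-5 accuracy is a much more lenient criterion than top-1, which is why it is consistently higher in the results above.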

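【Experiment 4】 Model Inference and Prediction (Application)

Summary: Once a model has been trained, we evaluate it on the test set and can then use it for prediction in real scenarios to see how well it performs.

Objectives:

- Learn to test with the deployed inference model

- Learn to preprocess test samples with the basic preprocessing method and ten-crop

- Be able to run batch testing with test() and single-sample inference with predict() on test samples

4.1 Importing Dependencies and Configuring Global Parameters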

    # Import dependencies
    import numpy as np
    import random
    import os
    import cv2
    import json
    import matplotlib.pyplot as plt
    import paddle
    import paddle.nn.functional as F

    args = {
        'project_name': 'Project012AlexNetZodiac',
        'dataset_name': 'Zodiac',
        'architecture': 'Alexnet',
        'input_size': [227, 227, 3],
        'mean_value': [0.485, 0.456, 0.406],  # ImageNet mean
        'std_value': [0.229, 0.224, 0.225],   # ImageNet standard deviation
        'dataset_root_path': 'D:\\Workspace\\ExpDatasets\\',
        'result_root_path': 'D:\\Workspace\\ExpResults\\',
        'deployment_root_path': 'D:\\Workspace\\ExpDeployments\\',
    }

    model_name = args['dataset_name'] + '_' + args['architecture']
    deployment_final_models_path = os.path.join(args['deployment_root_path'], args['project_name'], 'final_models', model_name + '_final')
    dataset_root_path = os.path.join(args['dataset_root_path'], args['dataset_name'])
    json_dataset_info = os.path.join(dataset_root_path, 'dataset_info.json')

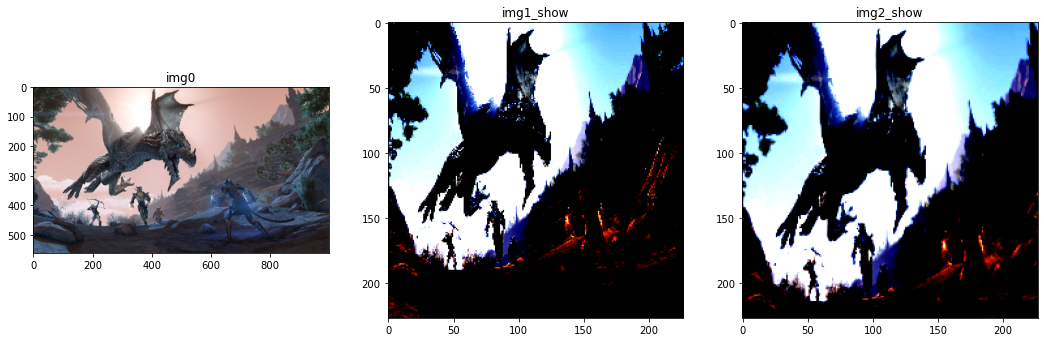

4.2 Defining the Preprocessing Functions for Inference

    import paddle
    import paddle.vision.transforms as T

    # 2. Ten-crop used at test time
    def TenCrop(img, crop_size=args['input_size'][0]):
        # input_data: Height x Width x Channel
        img_size = 256
        img = T.functional.resize(img, (img_size, img_size))
        data = np.zeros([10, crop_size, crop_size, 3], dtype=np.uint8)

        # Take the top-left, top-right, bottom-left, bottom-right and center crops
        # plus their horizontal flips: 10 samples in total
        data[0] = T.functional.crop(img, 0, 0, crop_size, crop_size)
        data[1] = T.functional.crop(img, 0, img_size-crop_size, crop_size, crop_size)
        data[2] = T.functional.crop(img, img_size-crop_size, 0, crop_size, crop_size)
        data[3] = T.functional.crop(img, img_size-crop_size, img_size-crop_size, crop_size, crop_size)
        data[4] = T.functional.center_crop(img, crop_size)
        data[5] = T.functional.hflip(data[0, :, :, :])
        data[6] = T.functional.hflip(data[1, :, :, :])
        data[7] = T.functional.hflip(data[2, :, :, :])
        data[8] = T.functional.hflip(data[3, :, :, :])
        data[9] = T.functional.hflip(data[4, :, :, :])

        return data

    # 3. Preprocessing for a single image (optionally with ten-crop): resizing and mean/std normalization
    def SimplePreprocessing(image, input_size=args['input_size'][0:2], isTenCrop=True):
        image = cv2.resize(image, tuple(input_size))   # cv2 expects the size as a (width, height) tuple
        image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
        transform = T.Compose([
            T.ToTensor(),
            T.Normalize(mean=args['mean_value'], std=args['std_value'])
        ])
        if isTenCrop:
            fake_data = np.zeros([10, 3, input_size[0], input_size[1]], dtype=np.float32)
            fake_blob = TenCrop(image)
            for i in range(10):
                fake_data[i] = transform(fake_blob[i]).numpy()
        else:
            fake_data = transform(image)
        return fake_data

    ##############################################################
    # Test the preprocessing: print the data shapes and show example images
    # for the raw input and for the two preprocessing modes
    if __name__ == "__main__":
        img_path = 'D:\\Workspace\\ExpDatasets\\Zodiac\\test\\dragon\\00000039.jpg'
        img0 = cv2.imread(img_path, 1)
        img1 = SimplePreprocessing(img0, isTenCrop=False)
        img2 = SimplePreprocessing(img0, isTenCrop=True)
        print('Original image shape: {}'.format(img0.shape))
        print('After simple preprocessing (without ten-crop): {}'.format(img1.shape))
        print('After simple preprocessing (with ten-crop): {}'.format(img2.shape))

        img1_show = img1.transpose((1, 2, 0))
        img2_show = img2[0].transpose((1, 2, 0))

        plt.figure(figsize=(18, 6))
        ax0 = plt.subplot(1,3,1)
        ax0.set_title('img0')
        plt.imshow(img0)
        ax1 = plt.subplot(1,3,2)
        ax1.set_title('img1_show')
        plt.imshow(img1_show)
        ax2 = plt.subplot(1,3,3)
        ax2.set_title('img2_show')
        plt.imshow(img2_show)
        plt.show()

Original image shape: (563, 1000, 3)

After simple preprocessing (without ten-crop): [3, 227, 227]

After simple preprocessing (with ten-crop): (10, 3, 227, 227)
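The `T.ToTensor()` and `T.Normalize(...)` steps in the preprocessing function turn an H x W x C `uint8` image into a C x H x W `float32` array and standardize each channel with the ImageNet statistics. A NumPy-only sketch of the same arithmetic is shown below, assuming a `uint8` RGB input; the function `to_tensor_and_normalize` and the constant gray test image are illustrative and not part of the notebook.

```python
import numpy as np

# ImageNet channel statistics, as configured in args['mean_value'] / args['std_value'].
mean = np.array([0.485, 0.456, 0.406], dtype=np.float32)
std = np.array([0.229, 0.224, 0.225], dtype=np.float32)

def to_tensor_and_normalize(image_hwc_uint8):
    """Scale to [0, 1], move channels first, then standardize each channel."""
    x = image_hwc_uint8.astype(np.float32) / 255.0      # uint8 [0, 255] -> float [0, 1]
    x = x.transpose(2, 0, 1)                             # HWC -> CHW
    return (x - mean[:, None, None]) / std[:, None, None]

# Hypothetical input: a uniform gray RGB image of the network's input size.
image = np.full((227, 227, 3), 128, dtype=np.uint8)
out = to_tensor_and_normalize(image)
print(out.shape, out.dtype)
```

The result has the same `[3, 227, 227]` layout as the single-image output above, which is why the notebook transposes it back to H x W x C before calling `plt.imshow`.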

    #################################################
    # Modified by: Xinyu Ou (http://ouxinyu.cn)
    # Purpose: evaluate the deployed model on the test set
    # Features:
    # 1. Run batch prediction on the test set with the deployed model and print the result
    # 2. Run single-sample prediction with the deployed model and compare the prediction with the ground truth
    #################################################

    # 1. Evaluate the accuracy of the deployed model on the test set
    def test(model, data_reader):
        accs = []
        n_total = 0
        for batch_id, (image, label) in enumerate(data_reader):
            n_batch = len(label)
            n_total = n_total + n_batch

            # Expand label to the shape [batch, 1] expected by the accuracy metric
            label = paddle.unsqueeze(label, axis=1)

            logits = model(image)
            pred = F.softmax(logits)

            acc = paddle.metric.accuracy(pred, label)
            accs.append(acc.numpy()*n_batch)   # weight each batch accuracy by its size

        avg_acc = np.sum(accs)/n_total
        print('Accuracy on the test set: {:.5f}'.format(avg_acc))

    # 2. Single-sample prediction with the deployed model
    def predict(model, image):
        # Q6. Complete the code of the inference function predict() below
        # [Your codes 8]
        isTenCrop = False
        image = SimplePreprocessing(image, isTenCrop=isTenCrop)
        print(image.shape)

        if isTenCrop:
            logits = model(image)
            pred = F.softmax(logits)
            pred = np.mean(pred.numpy(), axis=0)   # average the predictions over the ten crops
        else:
            image = paddle.unsqueeze(image, axis=0)
            logits = model(image)
            pred = F.softmax(logits)

        pred_id = np.argmax(pred)
        return pred_id

    ##############################################################
    if __name__ == '__main__':
        # 0. Load the model
        model = paddle.jit.load(deployment_final_models_path)

        # 1. Compute the accuracy on the test set
        # test(model, test_reader())

        # 2. Print the prediction for a single sample
        # 2.1 Load the label information of the dataset
        with open(json_dataset_info, 'r') as f_info:
            dataset_info = json.load(f_info)

        # 2.2 Randomly pick an image from the test list
        test_list = os.path.join(dataset_root_path, 'test.txt')
        with open(test_list, 'r') as f_test:
            lines = f_test.readlines()
        line = random.choice(lines)
        img_path, label = line.split()
        img_path = os.path.join(dataset_root_path, 'Data', img_path)
        # img_path = 'D:\\Workspace\\ExpDatasets\\Butterfly\\Data\\zebra\\zeb033.jpg'
        image = cv2.imread(img_path, 1)

        # 2.4 Predict the class of the sample
        pred_id = predict(model, image)

        # Convert the predicted label and the ground-truth label to label names
        label_name_gt = dataset_info['label_dict'][str(label)]
        label_name_pred = dataset_info['label_dict'][str(pred_id)]
        print('Ground-truth class: {}, predicted class: {}'.format(label_name_gt, label_name_pred))

        # 2.5 Show the sample
        image_rgb = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
        plt.imshow(image_rgb)
        plt.show()

[3, 227, 227]

Ground-truth class: horse, predicted class: dog
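When ten-crop is enabled, the prediction is the class with the highest softmax probability averaged over the ten crops. The sketch below reproduces that idea with NumPy only. It is an illustration under assumptions: the random image and the random logits stand in for a real test image and the model output, and `ten_crop` and `softmax` are simplified stand-ins for the Paddle calls used in the notebook.

```python
import numpy as np

def ten_crop(img, crop):
    """Four corner crops and the center crop, plus their horizontal flips."""
    h, w = img.shape[:2]
    top, left = (h - crop) // 2, (w - crop) // 2
    crops = [
        img[:crop, :crop],                        # top-left
        img[:crop, w - crop:],                    # top-right
        img[h - crop:, :crop],                    # bottom-left
        img[h - crop:, w - crop:],                # bottom-right
        img[top:top + crop, left:left + crop],    # center
    ]
    crops += [c[:, ::-1] for c in crops]          # horizontal flips of the five crops
    return np.stack(crops)

def softmax(logits):
    """Row-wise softmax with the usual max-subtraction for numerical stability."""
    z = logits - logits.max(axis=1, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

rng = np.random.default_rng(0)
img = rng.integers(0, 256, size=(256, 256, 3), dtype=np.uint8)   # stand-in for a resized image
crops = ten_crop(img, 227)

logits = rng.normal(size=(10, 12))       # stand-in for model(crops): 10 crops x 12 classes
mean_probs = softmax(logits).mean(axis=0)
pred_id = int(np.argmax(mean_probs))
print(crops.shape, pred_id)
```

Averaging over crops and flips usually makes the prediction less sensitive to where the object sits in the frame, at the cost of ten forward passes per image.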